Wizard of Oz

In Brief

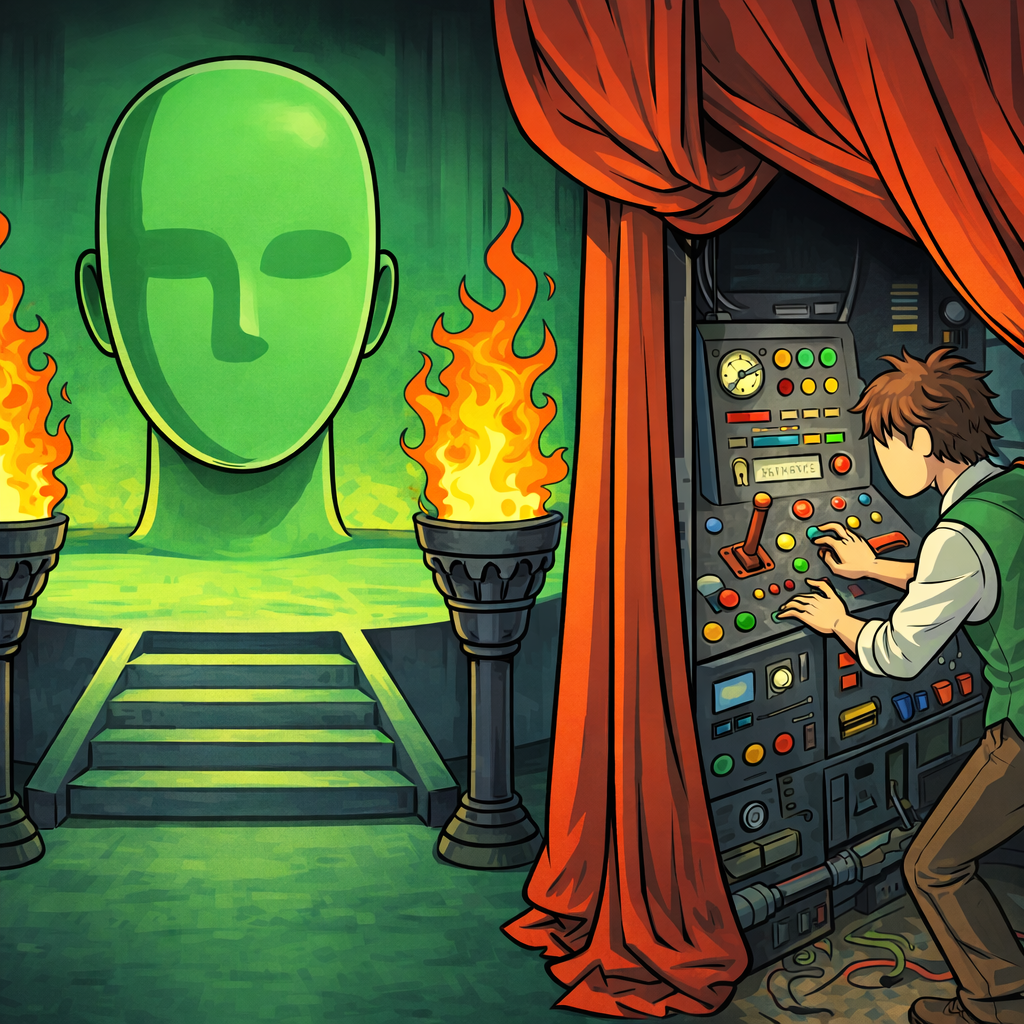

A Wizard of Oz test is a product experience that looks fully built to the customer, but the response on the other end is generated by an operator behind the curtain — usually an LLM under human approval today, sometimes a person typing manually. The customer does not know. You collect real engagement data and qualitative feedback on a finished-feeling experience without committing to the underlying system. The output is two things at once: signal on whether the value proposition lands, and a transcript of what the “wizard” actually had to do — which becomes your spec for the real build.

Common Use Case

You have a clear hypothesis about what the product should do, but the system that would deliver it is expensive or risky to build. Instead, you stand up a polished front-end and let an operator — often an LLM under human review — generate the responses for the first 10–30 customers. You learn whether the experience changes their behavior before you commit to the engineering.

Helps Answer

- Will customers come back, pay, or refer once they actually use this?

- What does the system actually need to do — and how often does each request type come up?

- Where does the experience break down: the inputs, the outputs, or the latency?

- Is this a real product, or is the magic actually the human in the loop?

Description

The Wizard of Oz technique was introduced at CHI ’83 and formalized in J.F. Kelley’s 1984 ACM TOIS paper, which used it to prototype natural-language interfaces by having a human silently produce the system’s responses on the other side of a terminal. It stayed a niche HCI research method for the next forty years. LLMs changed the math: now the easiest way to fake an intelligent product is to wire it to an actual model and have a human review what comes out.

The 2026 version of the method is mostly a hybrid. The customer sees a real-looking interface. Behind it, an LLM generates the candidate response, and a human operator approves it, edits it, or rewrites it from scratch before it goes out. The operator is still the wizard — they’re just no longer typing every word.

This matters because it changes what you’re testing. A pure-AI prototype tests the model. A pure-human prototype tests the value proposition. A hybrid Wizard of Oz tests the value proposition and shows you exactly where the model would have failed if you had shipped the AI-only version — every override the operator makes is a labeled defect in the eventual automated build.

When to reach for Wizard of Oz vs. just shipping a real LLM-backed prototype:

- Use Wizard of Oz when getting a wrong answer to a real customer would be expensive or harmful, when the failure modes of the underlying system are unknown, when you want to count override rates as a quality bar, or when the system you eventually want is materially harder than what an off-the-shelf LLM can do.

- Use a Vibe-Coded Disposable MVP when an off-the-shelf LLM with a tight prompt is good enough that you don’t need a human in the loop, and you’d rather watch real users in a real product than read transcripts.

- Use a Concierge Test when you don’t yet know the right shape of the product — the customer should know a human is delivering the service, so you can ask “is this even useful?” before you fake the automation.

The legacy case — pure-human wizard, no LLM involved — is still valid in two situations: the system you’re testing is fundamentally not a language task (logistics, physical fulfillment, hardware control) and the customer-visible “magic” is a non-AI service; or you specifically want to evaluate a target capability that current models cannot reach, and you need a human ceiling to define what success would look like.

How to

Prep

1. State the value proposition you are testing.

Write it as a single sentence. “We help [customer] do [task] so they get [outcome] without [the part that’s expensive to build].” If you cannot finish that sentence, you are not ready to run a Wizard of Oz — you are still in problem discovery, and a Concierge Test is the better method.

2. Decide what’s faked and what’s real.

The wizard’s job is the one capability that would be expensive or risky to build. Everything else can be real or stitched together with no-code tools. List the parts of the experience and mark each: real, faked by wizard, or stubbed (placeholder). The fewer pieces the wizard is responsible for, the cleaner your signal.

3. Pick the wizard mode.

Choose one of three:

- LLM-assisted (default). An LLM drafts every response. The human operator reviews, edits, or rewrites before sending. Best for language-heavy products (advisors, summarizers, schedulers, agents).

- LLM-free. The operator types every response. Use this when the task is not a language task, when you want a clean human-ceiling baseline, or when LLM responses would be obviously wrong in this domain.

- Tool-augmented LLM. The LLM plus tool calls (search, calendar, database) drafts a response; the operator reviews. Use this when the eventual product needs to take actions, not just generate text.

4. Build the front-end.

Create whatever the customer will interact with: a web form, a chat interface, an app screen, a Slack bot, an email address. It should look like a finished product. Vibe-code it, use Typeform / Retool / Airtable / V0, or wire up a Slack workspace. The customer should not be able to tell that there’s a person in the loop — that includes latency cues. If your real product would respond instantly and your wizard takes three minutes, build in an explicit “thinking…” or “results within 5 minutes” affordance so the delay reads as design, not as a tell.

5. Build the operator console.

The operator needs three things on one screen: the incoming request, the LLM-drafted response (if applicable), and a single Approve / Edit / Reject control. Log every approval, every edit (with the diff), and every rejection. The edit log is the most valuable artifact this test produces — it’s a labeled dataset of where the eventual automation will fail.
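A minimal sketch of the logging behind that Approve / Edit / Reject control, assuming a Python backend. The function name `record_decision` and the entry fields are hypothetical, not part of any specific console tool; the diff uses the standard-library `difflib` module.

```python
import difflib
from datetime import datetime, timezone

VALID_ACTIONS = {"approve", "edit", "reject"}

def record_decision(request, draft, final, action):
    """Build one console log entry (hypothetical schema).

    `final` is the text actually sent to the customer. For an edit, the
    unified diff between the LLM draft and the sent text is stored too.
    """
    if action not in VALID_ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    if action == "approve" and final != draft:
        raise ValueError("an approved response must match the draft")
    diff = []
    if action == "edit":
        # Line-level diff of draft vs. sent text, without trailing newlines.
        diff = list(difflib.unified_diff(
            draft.splitlines(), final.splitlines(),
            fromfile="llm_draft", tofile="sent", lineterm=""))
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "request": request,
        "draft": draft,
        "final": final,
        "action": action,
        "diff": diff,
    }
```

Passing the action explicitly, rather than inferring it from the text, keeps a rejection followed by a from-scratch rewrite distinguishable from a heavy edit.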

6. Write the wizard playbook.

A two-page document the operator can read in five minutes. It defines:

- The persona the wizard is responding as (tone, voice, length, what they will and will not do).

- A response template for each request type you expect to see.

- An escalation rule: when does the wizard say “I don’t know” or “let me get back to you” rather than make something up?

- A safety rule: what categories of request must be rejected outright (medical advice, legal advice, anything outside the tested value proposition)?

If two operators will share shifts, the playbook is what keeps their behavior consistent.

Execution

1. Recruit 10–30 real users.

The same audience you would target with the real product. Free users will give you fluffy data; paying customers (or customers who give you something costly — time, data, a referral) will give you signal. Set the test window: one to three weeks is typical.

2. Run the test live.

The operator works requests as they come in — drafting (or reviewing the LLM draft), editing, sending. Cover the actual hours your customers are using the product. Do not batch responses overnight unless the real product would also respond overnight; latency is part of the value proposition you are testing.

3. Log everything.

For each interaction, capture: incoming request, LLM draft (if any), final response, edit diff, response latency, operator notes (one line: “had to look up the answer,” “model hallucinated, rewrote from scratch,” “out of scope, escalated”). This log is the test.
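One possible shape for that log, sketched as a Python dataclass written out as JSON Lines. The class name `Interaction`, the field names, and the JSONL format are illustrative assumptions; any store that keeps these six fields per interaction would do.

```python
import json
from dataclasses import dataclass, asdict, field
from typing import List, Optional

@dataclass
class Interaction:
    """One logged exchange; fields mirror the capture list above."""
    request: str
    final_response: str
    latency_seconds: float
    llm_draft: Optional[str] = None          # None in LLM-free mode
    edit_diff: List[str] = field(default_factory=list)
    operator_note: str = ""                  # one line, free text

def to_jsonl(records):
    """Serialize records as JSON Lines, one interaction per line."""
    return "\n".join(json.dumps(asdict(r)) for r in records)
```

For example, `to_jsonl([Interaction("Reschedule my call", "Moved to Friday 10am.", 142.0)])` produces a single line that can be appended to a log file.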

4. Watch for drift.

When two operators share shifts, the wizard’s voice will drift. When an LLM generates the draft, the operator can become a rubber stamp (“looks fine, send”). Both kill the data. Spot-check one in five responses against the playbook — if the operator is approving without reading or editing without restraint, retrain mid-test.
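The one-in-five spot check can be drawn at random so operators cannot predict which responses get reviewed. A small sketch, assuming responses are identified by IDs; the function name and the `seed` parameter (for reproducible draws) are hypothetical.

```python
import random

def spot_check_sample(response_ids, fraction=0.2, seed=None):
    """Pick roughly `fraction` of responses for review against the playbook."""
    ids = list(response_ids)
    if not ids:
        return []
    rng = random.Random(seed)
    # Always review at least one response, even in a tiny batch.
    k = max(1, round(len(ids) * fraction))
    return sorted(rng.sample(ids, k))
```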

5. Run the same diagnostic the real product would run.

If the eventual product is supposed to drive a behavior — a return visit, a payment, a referral — measure that behavior, not just whether the customer enjoyed the response. A Wizard of Oz that produces five-star reviews and zero return visits is telling you the magic was the human, not the value proposition.

Analysis

1. Read the override log first.

Every edit and rejection is a labeled defect. Cluster them: which request types did the LLM consistently get wrong? Which did the human have to write from scratch? Which would have been actively harmful if the customer had received the LLM’s first draft? This is the gap between “we have a working prototype” and “we have a real product.”
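A rough sketch of that clustering step, assuming each log entry has already been tagged with a `request_type` label (a hypothetical field, assigned by the operator or by whoever reviews the log) and an `action` of approve, edit, or reject.

```python
from collections import Counter

def overrides_by_type(log):
    """Return (request_type, overrides, total) tuples, worst share first."""
    totals, overrides = Counter(), Counter()
    for entry in log:
        totals[entry["request_type"]] += 1
        if entry["action"] != "approve":
            overrides[entry["request_type"]] += 1
    rows = [(t, overrides[t], totals[t]) for t in totals]
    # Sort by the share of responses the operator had to override.
    return sorted(rows, key=lambda r: r[1] / r[2], reverse=True)
```

The request types at the top of the result are the ones where the draft was least often usable as written.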

2. Compute the override rate.

Count approvals, edits, and rejections, and express each as a percentage of total responses. The override rate is the share that was edited or rejected. A 90% approve rate means an off-the-shelf LLM is probably enough: drop the wizard and run a Vibe-Coded Disposable MVP instead. A 30% approve rate means the human is doing most of the work. The eventual product is a much harder build than you thought, and you need to decide if the value proposition is strong enough to justify it.
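As a sketch of the arithmetic, taking the override rate to mean the share of responses that were edited or rejected (an interpretation of the text above, not a formal definition). The function name is hypothetical.

```python
from collections import Counter

def decision_rates(actions):
    """Share of approve / edit / reject decisions, plus the override rate."""
    counts = Counter(actions)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no responses logged")
    rates = {a: counts[a] / total for a in ("approve", "edit", "reject")}
    # Override rate: everything the operator did not send as drafted.
    rates["override"] = rates["edit"] + rates["reject"]
    return rates
```

With nine approvals and one edit out of ten responses, the approve rate is 0.9 and the override rate is 0.1.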

3. Measure the real behavior.

Did customers come back? Did they pay? Did they refer? Compare to the assumption you wrote down in Prep step 1. Surface-level satisfaction does not validate the value proposition; behavioral commitment does.

4. Estimate the cost of automation.

For each cluster of requests in the override log, estimate what it would take to build that capability: a better prompt, a fine-tune, a tool call, a custom model, or “we cannot build this in the next 12 months.” Multiply by frequency. The clusters that are common AND expensive to automate are where your engineering risk lives.
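The multiply-by-frequency step can be sketched as a simple ranking. The cost unit (engineer-weeks), the function name, and the example numbers in the usage note are all illustrative assumptions, not data from a real test.

```python
def rank_automation_risk(clusters):
    """Rank clusters by frequency x estimated cost to automate.

    `clusters` is a list of (name, frequency, cost_estimate) tuples, where
    cost_estimate is in whatever unit you choose (here: engineer-weeks).
    """
    scored = [(name, freq * cost) for name, freq, cost in clusters]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

For example, `rank_automation_risk([("timezone math", 12, 2.0), ("tone tweaks", 30, 0.5), ("multi-party negotiation", 4, 10.0)])` puts the multi-party cluster first: it is rare, but its estimated cost dominates the product.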

5. Decide the next move.

One of three things should happen:

- The override rate is low, customers came back, the real behavior is there → ship a real build, no wizard.

- The override rate is high but the value proposition lands → keep the wizard, scale operators, treat the human-in-the-loop as a feature for now.

- The override rate is irrelevant because the real behavior didn’t show up → the magic isn’t real. Stop. Go back to a Concierge Test and figure out what customers actually want.
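The three outcomes can be written down as a small decision rule. This is a simplification: the cutoff between a "low" and a "high" override rate is not defined in the method, so the 10% default below is an assumption borrowed from the 90% approve-rate example, and `behavior_observed` stands in for whatever return, payment, or referral metric you chose.

```python
def next_move(override_rate, behavior_observed, max_override_for_ship=0.1):
    """Map test results to one of the three next moves (threshold is assumed)."""
    if not behavior_observed:
        # Override rate is irrelevant if customers did not change behavior.
        return "stop and return to a Concierge Test"
    if override_rate <= max_override_for_ship:
        return "ship a real build without the wizard"
    return "keep the wizard and scale operators"
```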

Potential Biases

- Hawthorne effect: Customers behave differently when they suspect they are being watched or that the system is not what it appears. Make sure the front-end and the latency are good enough that the user is not actively wondering whether a human is on the other end.

- Operator drift: Across a long test window the wizard’s voice changes: they get tired, they get clever, they cut corners. Mid-test recalibration against the playbook keeps the data clean.

- Rubber-stamp bias (LLM-assisted mode): When the LLM drafts every response, the operator stops reviewing carefully. Flag a random 10% of responses for blind double-review by a second person, or the override-rate metric becomes meaningless.

- Anchoring on the first generation: Operators tend to keep most of what the LLM produced even when a fresh response would be better. Build “regenerate from scratch” into the console as a one-click option, and track how often it gets used.

- Over-trust in LLM responses: Wizards under time pressure approve plausible-sounding wrong answers. Sample-check final responses against ground truth; if the LLM’s hallucination rate is above what you’d accept in production, that’s a finding, not a bug in the test.

- Human-attention false positive: Customers respond warmly to anything that feels personally attended to. A high satisfaction score with no return visits usually means you measured the human, not the product. Always pair satisfaction with a behavioral metric.

- Sampling bias: A 15-user test with friendly early adopters tells you almost nothing about the broader market. Either pull users from the channel you’ll actually launch in, or treat the result as directional only.

Learn more

Case Studies

Strella

Co-founder Priya Krishnan roleplayed as the AI moderator on Zoom calls in mid-2024 (camera off, deliberately robotic voice, five-second response delay) before any model was wired up. The validation funded the Phase 2 design-partner program and the actual AI build. A textbook 2024-era LLM-free Wizard of Oz of a product that was supposed to be AI from the start.

x.ai (Amy)

Launched an “AI scheduling assistant” that users could CC on emails. In the early days many requests were handled by human operators following scripts, not AI. The transcripts let them see scheduling edge cases before investing in NLP automation. With current LLMs, a re-run of this test would be a hybrid Wizard of Oz with the model drafting the reply and the operator reviewing.

Aardvark

The social-search service ran a Wizard of Oz pattern for the better part of a year before automation, with employees manually answering questions routed through the platform. Acquired by Google in 2010. The historical canonical example of a multi-month operator-staffed wizard test, predating LLMs.

CardMunch

Photographed business cards uploaded by users were transcribed by humans behind the scenes before any OCR was deployed. The team validated demand and built a labeled dataset for the eventual ML model from the same wizard transcripts. Acquired by LinkedIn in 2011. The early-2010s template for “the wizard transcripts are also your training data.”

Zappos

Founder Nick Swinmurn photographed shoes at local stores and posted them online; when someone ordered, he bought the shoes at retail and shipped them himself. A non-language Wizard of Oz: the customer saw an inventory-and-fulfillment system that did not exist. Acquired by Amazon for $1.2B. The canonical reminder that Wizard of Oz is not just an AI method.

Further reading

- J.F. Kelley — An Iterative Design Methodology for User-Friendly Natural Language Office Information Applications (ACM TOIS, 1984)

- Maulsby, Greenberg, Mander — Prototyping an Intelligent Agent through Wizard of Oz (CHI ’93)

- On LLM Wizards: Identifying Large Language Models’ Behaviors for Wizard of Oz Experiments (ACM IVA 2024)

- Prototyping Multimodal GenAI Real-Time Agents with Counterfactual Replays and Hybrid Wizard-of-Oz (arXiv 2510.06872, 2025)

- The AI of Oz: A Conceptual Framework for Democratizing Generative AI in Live-Prototyping User Studies (Applied Sciences, 2025)

- Concierge vs. Wizard of Oz Prototyping (Kromatic)

- Pay No Attention to the Man Behind the Curtain (Kromatic)

- The Wizard of Oz Method in UX (Nielsen Norman Group)
