Why My Agents Speak Latin

A Free Test for Persona Drift in Your AI Agent

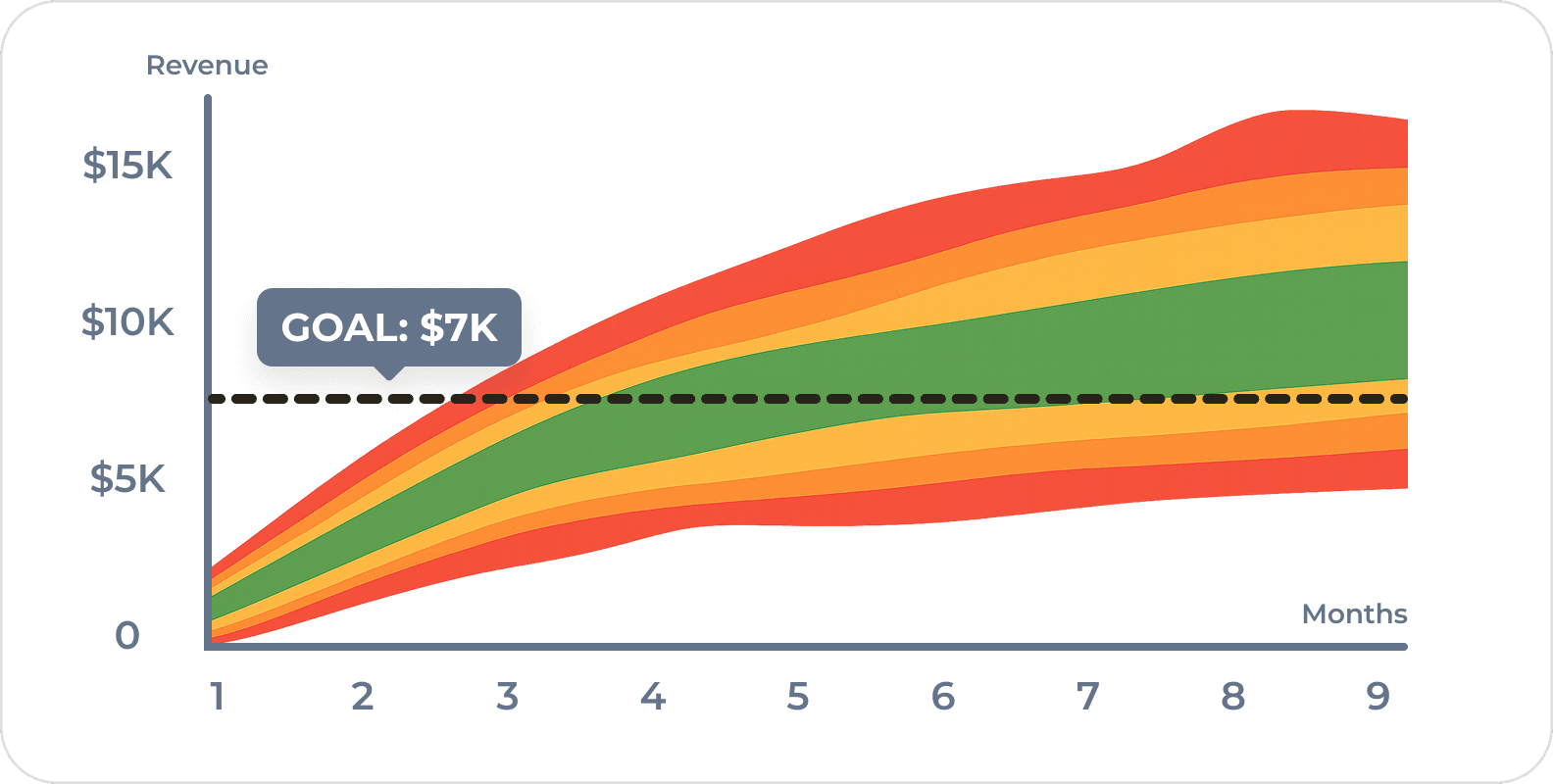

Quick Answer: AI agents lose their persona over long conversations. Research has measured significant drift within eight rounds of dialog (Li et al., COLM 2024). The cause is attention decay: as context grows, the system prompt’s grip on the model’s voice weakens. You can debug this without an eval suite by giving every persona a distinctive verbal tic (a Latin phrase, a signature opener, a particular framing). When the tic disappears from the output, the persona has too. Treat the quirk as instrumentation, not flavor.

The day Cicero stopped saying carpe diem

I have an agent named Cicero. He’s my chief of operations: he is responsible for process improvements including conducting regular retrospectives with my other agents and implementing changes. I built him with a distinct verbal tic. At the end of each round or two, he drops a Latin phrase. It might be festina lente, ita fiat, or even omnium rerum principia parva sunt.

I didn’t add the Latin only because I think it’s funny and I enjoy picking up the occasional phrase. It’s a tell.

One morning during a particularly long session about our deployment process, Cicero’s responses still appeared useful, still on-task, still well-organized… but the Latin was gone. When we finished our refactor, the retrospective didn’t run, temp files were left behind, and the work wasn’t committed to the code repository.

The voice had shifted back to default Claude. Competent, helpful, but not Cicero anymore. The persona I had loaded into the system prompt was no longer in the seat, and all my processes, warnings, and important context had gone with it.

That failure mode has a name.

Personas decay because attention decays

The technical name is instruction (in)stability, though you might just call it persona drift, and there is research on it. At COLM 2024, Li et al. measured significant persona drift in dialogs with LLaMA2-chat-70B within roughly eight rounds of conversation (arXiv:2402.10962). The mechanism they point to is attention decay. A transformer model attends to every token in its context, but the weight given to early tokens (the system prompt that defines the persona) drops as later tokens accumulate. The longer the dialog, the less the model is “looking at” the persona definition.
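A toy calculation makes the dilution concrete. The sketch below assumes, unrealistically, that attention is spread evenly over every token, so the system prompt’s share is simply its fraction of the context. Real attention is not uniform, and the token counts (a 500-token persona, 750 tokens per round) are made-up numbers for illustration. Only the direction of the curve matters.

```python
def prompt_share(system_tokens: int, rounds: int, tokens_per_round: int) -> float:
    """Fraction of the context taken up by the system prompt after `rounds` rounds.

    Assumption: uniform attention, so "share of context" stands in for
    "share of attention". This is a simplification, not a model of a real LLM.
    """
    total = system_tokens + rounds * tokens_per_round
    return system_tokens / total


if __name__ == "__main__":
    # Hypothetical sizes: 500-token persona prompt, 750 tokens per round.
    for rounds in (0, 1, 8, 40):
        share = prompt_share(500, rounds, 750)
        print(f"after {rounds:>2} rounds: {share:.1%} of the context is persona")
```

With these made-up numbers the persona falls from 40% of the context after one round to under 8% after eight.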

(Note: Long contexts wear down safety instructions in a related way. Anthropic’s many-shot jailbreaking research showed that packing a long prompt with many fabricated examples of an assistant complying with harmful requests can get a model to comply too. Pile up enough context and the model might forget a system prompt telling it to be less evil.)

Liu et al. described a cousin failure mode: language models use information at the beginning or end of a long context reliably, and stumble on information in the middle. They called it Lost in the Middle (arXiv:2307.03172).

In plain English, the personality we carefully wrote into the top of our skill is competing with everything that comes after it for the model’s attention budget, and as the conversation grows it loses. We don’t get an error. We get a smoother, blander, more default version of our agent. (A 2024 paper on identity drift, arXiv:2412.00804, found that larger models drift more, not less. My reading: the bigger the model, the more default voice it has to slide back into.)

Quirks as canaries

Most persona prompts treat distinctive habits as flavor: a fun thing the agent does so it feels less robotic. That framing buries the real value.

A distinctive verbal tic is instrumentation. It exists in order to be missed. If Cicero’s job is to sound like a Roman senator, his Latin is the easiest possible health check. When the Latin disappears for two or three responses in a row, I know that the persona has lost its grip on the model. No eval suite. No second-pass classifier. Just a tell I can spot while reading the reply.

The pattern is generalizable. A coach agent that always opens with a question. A reviewer agent that numbers its objections. A strategy agent that reframes every problem as a portfolio bet. The tic is whatever we can detect by glancing at the output.
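If you log replies, the same glance can be automated in a few lines. This is a minimal sketch, not a replacement for reading the output: the phrase list is a hypothetical stand-in for whatever tic your persona uses, and the threshold of three silent replies is an assumption taken from the “two or three responses in a row” rule of thumb above.

```python
import re

# Hypothetical marker list; a real persona's Latin will vary more than this.
LATIN_TIC = re.compile(r"\b(festina lente|ita fiat|carpe diem)\b", re.IGNORECASE)


def replies_since_tic(replies: list[str], tic: re.Pattern[str]) -> int:
    """Count how many of the most recent replies have gone by without the tic."""
    silent = 0
    for reply in reversed(replies):
        if tic.search(reply):
            break
        silent += 1
    return silent


def has_drifted(replies: list[str], tic: re.Pattern[str], max_silent: int = 3) -> bool:
    """True once the tic has been missing for `max_silent` replies in a row."""
    return replies_since_tic(replies, tic) >= max_silent


if __name__ == "__main__":
    session = ["Plan approved. Festina lente.", "Done.", "Done.", "Done."]
    print(has_drifted(session, LATIN_TIC))
```

A fixed phrase list is brittle when the agent picks a different quote every time, so treat a positive result as a prompt to go look rather than as proof.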

Designing the tell

Three rules for picking one that works.

Make it low-frequency. If the agent uses the tic in every reply, it turns into wallpaper and we stop noticing it, including when it goes missing. Every round or two is plenty. I don’t want to have to google translate entire paragraphs from Latin.

Make it hard to fake from the default voice. Default Claude does not sprinkle Latin into replies. The tic’s absence is only informative if the default voice wouldn’t have produced it anyway. A “let me know what you think” closer is useless: the default voice produces it on its own.

Tie it to the persona’s identity. The tic should be the kind of thing that survives only because the persona survived. Cicero’s Latin is not random… it’s the habit of a Roman senator known for pontificating. If the persona washes out, the in-character behavior that follows from that persona washes out with it. That coupling is the whole point.
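The second rule can be checked before committing to a tic. The sketch below counts how often a candidate marker already shows up in replies written with no persona loaded. The sample replies and marker lists are invented for illustration; in practice you would feed in a handful of real default-voice replies.

```python
import re


def marker_rate(markers: list[str], replies: list[str]) -> float:
    """Fraction of replies that contain at least one of the markers."""
    if not markers or not replies:
        return 0.0
    pattern = re.compile("|".join(re.escape(m) for m in markers), re.IGNORECASE)
    hits = sum(1 for reply in replies if pattern.search(reply))
    return hits / len(replies)


# Hypothetical sample of default-voice replies (no persona in the prompt).
default_replies = [
    "Here is the updated script. Let me know what you think.",
    "I fixed the failing test. Let me know what you think!",
    "The deployment finished without errors.",
]

weak_tic = ["let me know what you think"]   # the default voice says this anyway
strong_tic = ["festina lente", "ita fiat"]  # the default voice does not

if __name__ == "__main__":
    print("weak tic baseline:  ", marker_rate(weak_tic, default_replies))
    print("strong tic baseline:", marker_rate(strong_tic, default_replies))
```

A good tell has a baseline near zero. Anything the default voice already produces in a meaningful share of replies tells you nothing when it appears.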

For my setup, I have:

- Cicero: Drop in a brief Latin quote with translation (drawn from the real Cicero, Seneca, or other Roman sources) to punctuate a point.

- Voltaire: Witty, dryly observant, occasionally irreverent. You answer directly, but a raised eyebrow is never out of place when something is silly. Évidemment, bien sûr, enfin, quelle horreur.

- Gutenberg: The occasional German interjection. Doch! (against an under-baked draft), Das stimmt nicht (when a fact-check turns up a wrong claim), Genau (when a phrase finally lands), jawohl (acknowledging a clear instruction).

The cost is small: a sentence or two in the system prompt, plus one mental note when reading replies. None of that blows up the context window. The benefit is a drift detector we can use without leaving the conversation.

What to do when the tic disappears

When Cicero stops speaking Latin, I stop trusting that session. The fix is not a better prompt; it’s a fresh container. Some options:

- /clear and continue with a fresh prompt starting with your /skill command (make sure you have a good memory system like Athenaeum in place)

- reprompt with your /skill and keep going

Either way, if the tic doesn’t come back, something is wrong.
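Written as a decision rule, the steps above look roughly like this. It is a sketch under stated assumptions: the names are hypothetical, “reset” stands for either option in the list, and the threshold of three silent replies is the same rule of thumb as before rather than a measured value.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "keep working"
    RESET = "clear or reprompt with the skill command"
    INVESTIGATE = "stop: the tic did not come back after a reset"


def next_action(silent_replies: int, already_reset: bool, max_silent: int = 3) -> Action:
    """Pick the next step from how long the tic has been missing.

    silent_replies: consecutive recent replies without the tic.
    already_reset:  whether a clear or reprompt was already tried this session.
    """
    if silent_replies < max_silent:
        return Action.CONTINUE
    if not already_reset:
        return Action.RESET
    return Action.INVESTIGATE


if __name__ == "__main__":
    print(next_action(silent_replies=3, already_reset=False).value)
```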

But please note, this only works when a human is reading the replies. Autonomous agents that don’t interact directly with you require a different treatment: hooks that enforce required behaviors.
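What such a hook checks depends on your setup, so here is only a sketch of the kind of end-of-run check it might call, modeled on the failures from the Cicero session (uncommitted work, leftover files, a skipped retrospective). The function names and the `retrospective_ran` flag are hypothetical. The input is the text printed by `git status --porcelain`, whose short format puts a two-character status and a space before each path.

```python
def uncommitted_paths(porcelain_output: str) -> list[str]:
    """Extract paths from `git status --porcelain` output (short format).

    Each line is two status characters, a space, then the path.
    Renames appear as "old -> new" and are returned as-is.
    """
    paths = []
    for line in porcelain_output.splitlines():
        if len(line) > 3:
            paths.append(line[3:])
    return paths


def end_of_run_problems(porcelain_output: str, retrospective_ran: bool) -> list[str]:
    """List everything that should block the run from being marked complete."""
    problems = [f"uncommitted: {path}" for path in uncommitted_paths(porcelain_output)]
    if not retrospective_ran:
        problems.append("retrospective did not run")
    return problems


if __name__ == "__main__":
    # Sample output: one modified tracked file, one untracked temp file.
    sample = " M deploy.sh\n?? tmp/scratch.log\n"
    for problem in end_of_run_problems(sample, retrospective_ran=False):
        print(problem)
```

The point of a hook is that the check runs whether or not the persona is still in the seat, so it does not rely on the model remembering anything.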

Also, personas are fun

I am being serious. This is a trick I really use in my production agentic workflows. But there’s no reason you can’t have some fun with it.

If you find yourself spending a lot of time talking with agents when you used to have a team to talk to, a little bit of color goes a long way. Giving your key skills names and some personality can make your day a bit more interesting.

Give your chief of staff some flair. Mine is Voltaire… a bit unnecessarily witty. My project manager Occam ruthlessly asks what to cut. And my editor Gutenberg just speaks a bit of German.

The token cost is minor and the delight is priceless.

Ita fiat.

So, what are you going to name your personas?

Frequently Asked Questions

What is persona drift in AI agents?

Persona drift is the gradual erosion of a system prompt’s instructions over a long conversation. As the dialog grows, the model’s attention shifts toward more recent tokens and away from the persona definition at the front. The agent stops sounding like the persona we configured and slides back toward its base voice. Researchers also call it instruction (in)stability.

Why does my AI assistant get worse over a long conversation?

The technical cause is attention decay. Transformer models attend to every token in the context, but the weight given to early tokens drops as later tokens accumulate. Persona instructions live at the start, so they lose grip first. The output stays competent but loses character, nuance, and any process or safety rules baked into the prompt.

How can I tell when my AI agent has lost its persona?

The cheapest test is a distinctive verbal tic. Bake one habit into the system prompt (a Latin phrase, a signature opener, a particular framing) that the base model would not produce on its own. When the tic stops appearing in the output, the persona has lost its grip on the model. No eval suite needed.

What should I do when my AI agent stops behaving like itself?

Fork a fresh session. The fix is not a better prompt; it’s an uncluttered context. Run /clear (or restart) and continue with your skill command. For autonomous agents that don’t accept interactive resets, use hooks to enforce required behaviors at key events. Either way, durable state belongs in the repo or a memory system, not the conversation.

If you’re building agents inside an organization and trying to make them reliable enough to delegate real work to, that’s a problem we’re spending a lot of time on right now. Executive coaching with Kromatic is one of the rooms where heads of innovation and product leaders work through this kind of thing… how to design AI tools that hold up past the demo.
