What We Learned Running Our Own Operations on Agentic Memory

Quick Answer: Agentic memory is the infrastructure that lets AI agents (and the humans they work with) carry decisions, sources, and context across sessions. Running our own operations on it taught us four design choices that separate production-grade memory from a single-user wiki: treat sources as first-class objects so every fact carries provenance, compile raw intake through a tiered librarian so multiple agents can write safely to the same wiki, recall passively through a sidecar that fires before the agent has to ask, and keep the what-to-save filter as an editable prompt instead of an opaque black box.

The highest-value agent we have isn’t writing code, making illustrations, or writing tweets. It’s remembering things.

It remembers why we’re building an app, why we picked this feature set, and what our current goals are. It remembers how we work well together, the nuances of our company operating system, and even reminds us to run retrospectives with our agent team.

As the adage goes, when we don’t remember what we’ve done, we tend to repeat it.

A few weeks ago we published “Your AI Agents Have Amnesia, Here’s the Fix”, a post prescribing institutional memory for agents. Since then we’ve done the thing we told everyone else to do. We built the system, open-sourced it, and moved our own operations onto it.

A month in, the benefits are compounding. But we ran into enough roadblocks that we ended up writing our own memory infrastructure and open-sourcing it as Athenaeum. The three biggest issues were:

- Claude doesn’t know what it knows. Sometimes Claude has a memory but never thinks to search for it.

- Claude doesn’t know what to remember. What it chooses to save isn’t always what we’d choose.

- Memories don’t have a source of truth. If we don’t know where a fact came from, we don’t know whether to trust it.

Essentially, Claude wasn’t remembering everything it should, didn’t remember who told it what, and didn’t always think to check its memories in the first place.

This post is about what we learned and how we implemented something that works for us.

Real-world use cases

For Kromatic, we aren’t just logging loose thoughts, articles, and miscellaneous documents. We have over thirteen years of documents, 13,000+ contacts, a full CRM, GitHub repositories, and internal workflows and best practices. Since we’re running multiple autonomous routines to manage applications, fix bugs, prepare newsletters, and more, we needed something relatively robust that could handle some recurring questions:

Why are we doing this? Strategy and goals need to be well documented so an autonomous agent flow (or a hapless human like me who can’t remember what was decided last month) can get up to speed. Every action should link to an overall goal and contribute to it. Actions that don’t should be cut. We want to know what our current strategy and goals are so we can make effective decisions.

What’s our relationship with this person or company? Our CRM had data on who we talked to in the past, but it wasn’t well integrated with our internal documentation, address book, or client list. There was no clear source of truth on what we did, with whom, and what the outcome was. Our client list said one thing, our sales notes said another, and our CRM was just out of date. We want to know what we know about a person or company, and where we know it from, so we can separate fact from fiction.

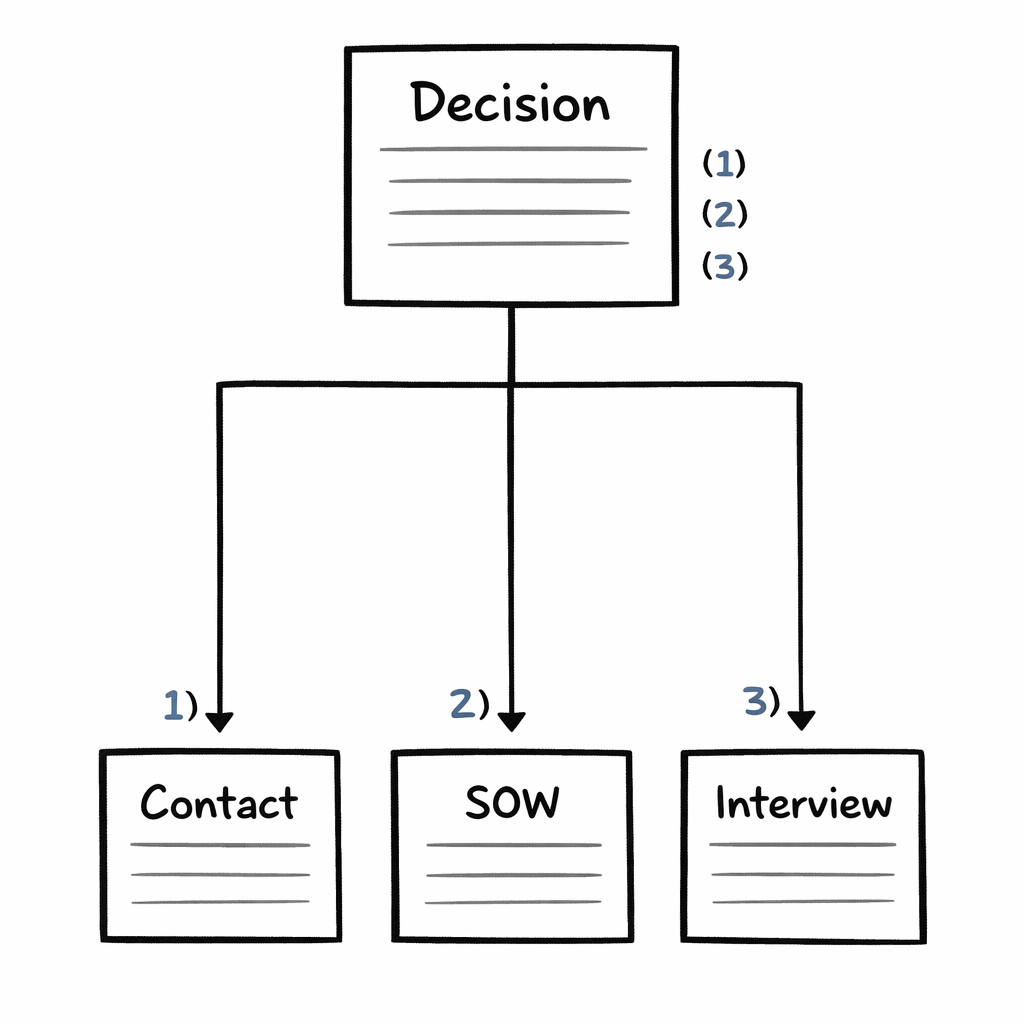

Why did we decide this? We’ve got agents and humans all making decisions. They drift and contradict each other fast if we don’t document who decided what and why. What was the decision, the retro that informed it, and the customer interview that triggered it? We need everything linked and traceable. Settled decisions stop being re-litigated every quarter (or every week!).

What did we learn last time we tried this? Agents (and humans) make mistakes, often. Instead of repeating the same mistake, we run retrospectives regularly and file them. We need to know what worked and what didn’t so we avoid repeating errors.

All four are questions where the cost of not finding the answer is re-doing the underlying work. In the agentic world, the cost of rework is tokens burned and time wasted.

Existing solutions

We didn’t set out to build our own. We tried what was already out there, and each option solved part of the problem without solving the whole thing. Here’s an honest take on each, and where it runs out of room for a team.

Claude’s built-in memory

Claude Code has two ways to carry things across sessions: a CLAUDE.md file you write by hand for rules you want Claude to follow, and an auto-memory feature where Claude saves notes to itself about your preferences and corrections (Claude Code memory docs).

Pros

- Zero setup: just a markdown file

- Human-readable, versioned in git

- Works immediately

Cons

- Built for one person on one machine; nothing crosses to a teammate

- No notion of where a fact came from, so nothing to re-check when it turns out to be wrong

- No way for multiple agents to write safely to the same memory

- Claude still has to think to consult it, and sometimes doesn’t

Anthropic’s memory tool

Anthropic also ships a memory tool aimed at developers building their own agents. It’s essentially a set of basic file operations the agent can call to read and write its own notes. Combined with their context-editing feature, Anthropic reports a 39 percent improvement on their internal agent evaluations.

Pros

- A good low-level building block for people writing their own agents

- Flexible: you decide what to store and how

Cons

- It’s a primitive, not a system. No sense of what a “page” or an “entity” is, and no trust graph

- Nothing built in for multiple agents writing to the same memory

- Still on-demand; the agent has to remember to look

- No team sharing out of the box

Document stores and retrieval (RAG)

The most common answer to “where does our knowledge live?” is a pile of Google Docs, Notion pages, or a shared drive, with retrieval-augmented generation bolted on so an agent can pull relevant chunks at query time.

Pros

- Uses documents people already write

- Agents can find relevant content across a large corpus

- Low initial setup

Cons

- “Where did this come from?” stops at the document, not the claim, so nothing to invalidate when one source turns out to be unreliable

- Retrieval returns fragments, not a single page an agent or human can trust as the current answer

- No clean story for multiple agents updating the same underlying knowledge; documents drift out of sync

- Still on-demand retrieval; only fires when an agent or human explicitly asks

Karpathy’s wiki gist

On April 4, 2026, Andrej Karpathy published a gist describing “A pattern for building personal knowledge bases using LLMs.” The idea: have an LLM compile what it learns into wiki-style markdown files and use Obsidian to browse them. It was good timing (we were working through exactly this problem) and it’s well aligned with what many people were already doing: piling markdown files into folders to give LLMs context.

Pros

- Elegant and simple: the wiki is the memory, no hidden index

- Human-readable and human-editable

- Obsidian’s graph view is a nice match for how the knowledge is shaped

Cons

- Explicitly a personal pattern; Karpathy frames it for individuals, not teams

- No concept of sources as separate, trustworthy objects

- No safe way for several agents to write to the same wiki at once

- The agent still has to think to look, so there’s no passive recall

We took Karpathy’s core idea (the wiki is the memory) seriously. What we added are the pieces a team needs that he deliberately left out.

Agent-memory libraries

There’s now a category of tools that package “memory for agents” as a library or service. The most-cited:

- mem0: positions itself as a universal memory layer, with a research paper behind it and strong numbers on long-conversation benchmarks.

- Letta: commercial continuation of the MemGPT paper, which treats agent memory like an operating system paging information in and out of context.

- Zep: a hosted memory service built on their open-source temporal knowledge graph, Graphiti.

- Cognee: an open-source memory engine that combines vector search with a graph database.

Pros

- Serious retrieval quality; these teams have invested in making lookup fast and accurate

- Strong fit for long-horizon conversations between one user and one agent

- Competitive on published benchmarks

Cons

- Designed around a single user or a single agent; multi-agent collaboration is out of scope or a later add-on

- No real concept of a source and a trust graph; a saved fact doesn’t carry where it came from

- Memory is a lookup layer an agent queries, not a shared workspace humans and agents both live in

- The agent still has to decide to query; none of these fire passively before the model responds

The gap we kept hitting is architectural. These tools answer “given this query, find the right chunk.” They don’t answer “given a stream of inputs from multiple agents and humans, keep a single trustworthy wiki that everyone (agents and humans) can read and write safely.” That’s a different job, and it’s the one a team actually has.

Our solution: Athenaeum

Since nothing we tried solved the whole problem, we wrote our own and open-sourced it so you can copy or modify it. To address our three issues, we made a few specific design choices.

Sources — trust but verify

The single largest change in our thinking was this: an LLM-written fact and a human-cited source are not the same type of thing, and a memory system that treats them as the same thing is unsafe to run a business on.

A real example: while synthesizing some client documentation, Claude hallucinated that we’d worked for the Austrian Federal Forestry Service (Österreichische Bundesforste). This is false. We did talk to them and have a fun debate about how to apply lean startup to growing trees, but it was an exchange of information, not an engagement.

If Claude had later surfaced that claim in marketing material, it would have been a problem for us.

Our fix was to promote sources to first-class objects and require citations for any factual claim. A person page references sources such as a Google contact record. A client engagement cites a contract or SOW. A strategy doc lists the data that informed the decision. If a source is later marked wrong or unreliable, we can see every page that cited it, and fix them.

Essentially, this is a built-in trust graph. Wikipedia works this way, and it’s one of the reasons Wikipedia actually works. The footnote is the unit of trust. An unfootnoted claim is an assertion. We can follow the footnotes and decide for ourselves whether the source is trustworthy.

This matters particularly for agentic work because agents tend to treat prompts (including memory files) as absolute truth, unquestioningly. In this system, a human assertion can be cross-checked against memory files with real sources to tell who’s correct. It prevents either agents or humans from being confidently incorrect.

The librarian — keep the memory palace organized

This has taken a while to get right and still probably needs work. We wanted a structure that was human-readable and allowed progressive discovery for agents: short files with just enough detail to know whether it’s worth digging deeper.

Raw writes to memory get messy. Three facts about the same person surfaced across three sessions shouldn’t become three different person pages. An observation that contradicts an existing page needs a decision. Is the old page wrong, or is this a new context? That decision can’t be made cheaply by the agent that saw the observation, because it doesn’t have the whole wiki in view.

Each entity also carries a stable UID and a list of aliases in its frontmatter, and search weights both well above body text. That’s how “Amanda” still finds Amanda Smith the next time someone drops her first name, and how the librarian knows to merge onto the existing page instead of quietly creating a duplicate.

Our answer is the librarian: a tiered compilation pipeline that runs outside any agent session. Agents can only add to raw intake. They can’t overwrite or delete what’s already there, and they can’t write to the wiki itself. The librarian is the only process that edits wiki pages, and it snapshots the wiki to git before every run, so a bad merge is a git revert away.

- Tier one, programmatic. Normalization, dedup, formatting.

- Tier two, fast LLM. Classification. Is this about a known entity, or a new one? Routes the observation.

- Tier three, capable LLM. The actual compilation. Merges new information with existing pages, resolves simple contradictions, writes the updated entity.

- Tier four, human escalation. Anything ambiguous lands in a

_pending_questions.mdfile for a human decision later.

This is a safety property that comes from structure, not trust. We don’t have to trust our agents to be careful writers, because they can’t be destructive writers.

This is what makes multi-agent, team-scale memory possible. The alternative (every agent editing the wiki directly) relies on hope for consistency.

Passive recollection — a parallel runner in each conversation

Claude doesn’t always check its memories. Sometimes it knows something but doesn’t know that it knows it.

That isn’t how human memory works (we have different problems). We don’t have to remember to remember… memories just leap to the surface, unbidden.

If an agent has to decide, on every turn, whether to call recall (and then decide what to search for, and then read the results, and then decide whether to use them), we don’t have a memory system. We have a knowledge base the agent can query if it happens to think of it.

So we built a sidecar that remembers for the agent and automatically injects the results into context.

Before any user message is processed, the prompt is classified for topics, a fast hybrid keyword-plus-vector search runs across the wiki, and the top hits are injected into the model’s context as knowledge context. The agent doesn’t decide to recall. Recall just happens.

This doesn’t clutter context, because the agent is only given a breadcrumb trail: page names and one-line hooks. It’s the conversational equivalent of knowing immediately that there’s a reference book on the shelf that might be relevant.

It’s also cheap. It’s not running a weighty model, just lightweight topic extraction and a vector lookup.

The result is that our agents can start a new conversation with the right context already loaded. We don’t need to spell out everything in a giant detailed prompt.

Passive observation — the notetaker

Remembering on recall is only half the loop. Something also has to decide what to save in the first place.

Claude’s native memory feature makes that decision behind a fixed, opaque filter. Sometimes it saves trivia (a passing comment about a preference) and misses the context we actually care about, like the rationale behind a decision we just spent an hour debating. There’s no way to fine tune it.

Our notetaker replaces that with a configurable observation filter: a prompt the librarian uses to decide what’s worth writing to raw intake. Because the filter is a prompt and not a black box, it’s easy to inspect, edit, and tune. When we notice memory saving things we don’t care about or missing things we do, we revise the prompt and the next pass gets better.

Better still, the filter itself is a wiki page the agent can edit during a session when we push back on a save, and every change is logged to raw intake as an audit trail. The notetaker learns from feedback in the moment, instead of waiting for us to remember to tune it later.

Between the notetaker and the librarian, important context ends up in memory even when the agent isn’t paying attention, and in a form we can audit and improve over time.

Key takeaways

Three takeaways from running this for real.

Writes are harder than reads. Everyone’s first instinct with agent memory is to build better retrieval. But what breaks quietly at scale is what gets written to the wiki and under what constraints. Add-only intake plus a tiered compiler (with the librarian as the only writer) is a solid architectural choice.

Provenance belongs in the model, not in a comment. If sources aren’t first-class entities, they get added as an afterthought, and you just can’t trust the data. This is suspiciously lacking in most memory models and is odd considering hallucinations are a well known issue.

Recall must be automatic. The default has to be recall-on-every-turn or it’s not functional recall. Recall needs to be automatic and cheap.

None of these are revolutionary. Human brains work much the same way. We need a memory system that works effectively and allows team collaboration.

Frequently Asked Questions

What is agentic memory?

Agentic memory is the infrastructure that lets AI agents carry information across sessions (decisions, sources, people, projects, context) in a form multiple agents and humans can read and write safely. It’s distinct from the model’s context window, from single-user note-taking, and from retrieval over arbitrary documents. In production it has to handle multiple writers, provenance, and passive recall, not just one user chatting with one bot.

How is agentic memory different from RAG?

RAG retrieves relevant text chunks from a document store at query time. It answers “given this question, what text is most relevant?” Agentic memory is the store itself, shaped as compiled entities (people, companies, decisions) with explicit sources and an invalidation path. RAG is a read mechanism; agentic memory is a read path, a write path, and a trust graph. Production systems typically use both.

Can multiple AI agents share the same memory safely?

Yes, but only if the write path is structured. If every agent edits the wiki directly, they overwrite each other and provenance disappears. The safer pattern is append-only intake (agents can only add raw observations) with a separate librarian process compiling those observations into the wiki. Multiple agents write concurrently; consistency is enforced by structure, not by trust.

How is this different from mem0, Letta, or Zep?

Those tools are optimised for one user talking to one agent: a lookup layer the agent queries during a conversation. What we mean by agentic memory is a shared workspace both agents and humans read and write, with sources as first-class objects, multi-agent-safe writes, and passive recall by default. Different problem, different shape. For a single-user chatbot, those tools are a good fit.

What we’re offering

We open-sourced the pipeline and the hooks as Athenaeum, Apache 2.0 licensed. If you’re a team deploying agents and thinking about how memory will work past the first prototype, the examples/claude-code directory will get you started.

The technology is the easy part. The harder part is implementation inside your own organization: what belongs in memory versus what doesn’t, who gets to write to what, how memory decisions get audited, how this fits alongside the knowledge systems you already have. Those aren’t code questions. They’re the strategy questions every team has to answer for themselves, and the answers look different inside a small consultancy than inside a regulated enterprise with an IT security review.

If you’re running a product or innovation program where how do we make agents actually learn across teams is the next twelve months’ problem, that’s a conversation we have a lot. If that’s you, we help teams think through this.

Comments

Loading comments…

Leave a comment