Why AI Mandates Backfire. Use Hooks Instead.

Quick Answer: Top-down AI mandates and AI-usage targets backfire because employees correctly perceive them as cost-cutting measures that threaten to replace them. Klarna reversed its AI customer-service experiment after quality fell, Duolingo’s CEO walked back his AI-first memo, and Chinese workers are publicly sabotaging the documentation their employers want to feed AI. A better approach: install agents at organizational hooks (events the work already produces, where a junior specialist would help). The hook is benign by design, augments humans where they’re weakest, and earns trust before asking for compliance. Measure outcomes, not adoption.

An AI mandate is a layoff notice with extra steps

Telling people to “use AI” in their jobs is not a winning transformation strategy.

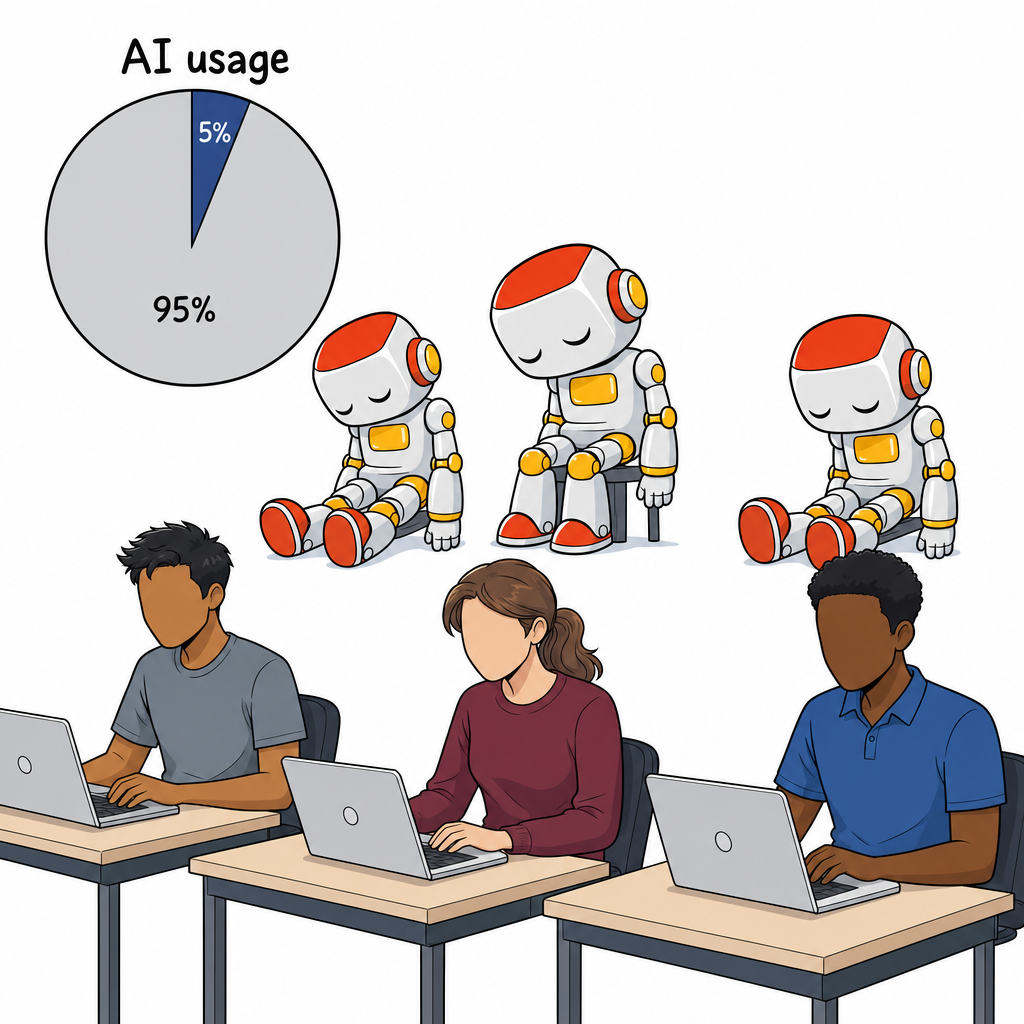

A mandated token quota, or a required percentage of work that must be automated, is a vanity metric that will not get you to the AI promised land of infinite productivity.

Klarna claimed in 2024 that AI could do the work of 700 customer-service agents, then publicly resumed hiring humans by 2025 once quality fell. Duolingo’s CEO sent an “AI-first” memo in April 2025 that tied AI use to performance reviews; in April 2026 he walked it back: “I’m not going to force you.” McKinsey reports that 94 percent of employees are familiar with generative AI, yet only one percent of company leaders describe themselves as mature on AI deployment.

The most laughable example of what happens when “use AI” becomes a metric is Meta’s unofficial “Claudeonomics” leaderboard, an employee-built dashboard ranking the top 250 internal AI token users. The top spot averaged 281 billion tokens in 30 days, roughly $1.4M of API spend by one person. The dashboard was taken down after two days.

The mandate is everywhere. The economic payoff is not.

Maybe you get people perfunctorily using AI to look up great nearby lunch spots. Maybe you get more lines of code produced (but no real economic impact). Maybe you get AI adoption theatre.

Worst case scenario, your AI initiative is perceived as a cost-cutting measure and a threat to jobs. When that happens, it’s the end of your initiative and the start of trench warfare.

Outright rebellions are already happening as teammates become adversaries and workers hoard knowledge with anti-skill distillation techniques.

This is the default outcome of pushing AI into an organization the way most companies are pushing it. The mandate frames the human as the problem to be solved. People are not stupid. They notice these things.

There is a better way: hook AI into the work to improve performance instead of just trying to cut costs.

AI Adoption Case Study: Sentry’s Seer

A few months ago, I was surprised to see a comment on a pull request (new code that I wrote).

It was surprising because no one else had access to that code base. The comment was from Seer, Sentry’s review agent. I do pay for Sentry, and I’d previously given them access to my GitHub repos for error monitoring. Sentry took advantage of that access to run a Seer review on my code automatically.

Was this a bit weird and intrusive? A little. Also delightful. I got a free code review identifying a bug I hadn’t noticed:

Severity: LOW

Seer didn’t fix the bug; it pointed out a potential problem. It was my choice to deal with it or not. And Seer even gave me a copy-paste prompt I could put into Claude or Cursor to investigate and solve the issue.

Essentially, Seer hooked itself into my existing process (a pull request) and gave me value I didn’t even know I needed. It did it in a way I couldn’t ignore, but was not compelled to act on.

This approach is both opt-out (I can turn it off) and empowering. I can choose to act if I think Seer is right and I can ignore it if not. It’s zero friction.

I don’t feel like Seer took my job away or was threatening to do so. (I already outsourced my coding to Claude anyway.) Seer built up trust by being helpful right off the bat. It didn’t claim to always be right; it just suggested further investigation.

That’s a good hook.

The result? I upgraded my Sentry account to continue getting Seer reviews after the beta.

Programmatic hooks

In software engineering, a hook is a small piece of automation that fires at a specific event in a workflow. An engineer makes a change to the software and tests are automatically run. When a new version of the software is pushed to production, an announcement is automatically triggered. Hooks allow you to enforce workflows.

For AI agents, hooks perform a similar function. When an agent tries to send an email on your behalf, a hook can send a notification to you. Or it can block the action as unsafe.
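Here is a minimal sketch of that kind of gate in Python. Everything in it is hypothetical: the `Action` shape, the `pre_action_hook` name, and the trusted-domain policy are assumptions for illustration, not any agent framework's real API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NOTIFY = "notify"  # let the action through, but tell the human
    BLOCK = "block"    # refuse the action as unsafe


@dataclass
class Action:
    kind: str       # e.g. "send_email"
    recipient: str  # e.g. "someone@example.com"


# Hypothetical policy: domains the agent may email once the human is notified.
TRUSTED_DOMAINS = {"example.com"}


def pre_action_hook(action: Action) -> Decision:
    """Runs before every agent action and returns what should happen to it."""
    if action.kind != "send_email":
        return Decision.ALLOW
    domain = action.recipient.rsplit("@", 1)[-1].lower()
    if domain in TRUSTED_DOMAINS:
        return Decision.NOTIFY
    return Decision.BLOCK
```

The point of the design is that the decision lives outside the agent: the agent proposes an action, and the hook decides whether it proceeds.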

I have a hook that starts any coding session with my agents. It automatically checks if there are other agents working on the folder in question and makes sure there aren’t any leftover files that might be overwritten. It’s a routine cleanup operation and a good practice.

But I can’t trust that an AI agent will do this automatically. As I’ve discovered to my horror, they usually don’t.

I can try to enforce these practices via a prompt or skill file, but that’s a soft enforcement mechanism that agents can ignore if the context drifts. A hook is a hard enforcement mechanism: it fires automatically and the agent cannot skip it.

Hooks work because they are predictable, narrow, and tied to a moment.
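A session-start check like the one I described could look roughly like this. It is a sketch under stated assumptions, not my actual hook: the `.agent.lock` marker file and the leftover-file patterns are made up for the example, and a real setup would wire `session_start_check` into whatever startup mechanism your agent tooling provides.

```python
from pathlib import Path

LOCK_NAME = ".agent.lock"                # hypothetical marker another agent would leave
LEFTOVER_PATTERNS = ("*.tmp", "*.orig")  # hypothetical scratch-file patterns


def session_start_check(workdir: Path) -> list[str]:
    """Return a list of problems; an empty list means the session may start."""
    problems = []
    lock = workdir / LOCK_NAME
    if lock.exists():
        problems.append(f"another agent holds {lock.name}")
    for pattern in LEFTOVER_PATTERNS:
        for leftover in sorted(workdir.glob(pattern)):
            problems.append(f"leftover file: {leftover.name}")
    return problems
```

It is predictable (same checks every time), narrow (two questions only), and tied to a moment (the start of a session).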

Organizational hooks

Your organization has similar moments baked into its processes.

- When a new employee is hired, a new laptop is ordered.

- When a customer asks for support, a ticket is created.

- When a product manager submits a new feature requirement, the engineering team is notified.

The hooks we’re looking for are the points where some part of a process touches software or a database, because that is where we can trigger an agent loop. For example:

- When a new employee is hired, a new email account is created.

- A customer asks for support via email.

- A product manager submits a new feature requirement into Jira.

These moments are ideal places to inject AI in a way that is actually helpful instead of threatening. Based on those triggers, we could easily implement something helpful:

- When a new employee is hired, a scheduling agent sets up 1:1s with team members.

- When a customer asks for support, a support agent sends possible solutions to the support team.

- When a product manager submits a new feature requirement, a statistical agent designs an A/B test to validate the feature.

These hooks can deliver information or actions that are helpful without being oppressive. You can cancel meetings or search the knowledge base yourself, but why would you? The grunt work is already done.
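The trigger-to-agent mapping above can be sketched as a small event registry. The event names, payload fields, and handler functions below are all hypothetical, and the handlers only draft a suggestion where a real agent would call a calendar or ticketing system.

```python
from typing import Callable

Handler = Callable[[dict], str]
_registry: dict[str, list[Handler]] = {}


def on(event_name: str):
    """Decorator that registers a handler for an event the org already emits."""
    def register(handler: Handler) -> Handler:
        _registry.setdefault(event_name, []).append(handler)
        return handler
    return register


def dispatch(event_name: str, payload: dict) -> list[str]:
    """Run every handler for the event and collect their suggestions."""
    return [handler(payload) for handler in _registry.get(event_name, [])]


@on("employee.account_created")
def schedule_intros(payload: dict) -> str:
    # Stand-in for a scheduling agent: drafts the suggestion only.
    return f"Propose 1:1s for {payload['name']} with team {payload['team']}"


@on("support.email_received")
def suggest_solutions(payload: dict) -> str:
    # Stand-in for a support agent: the support team still sends the reply.
    return f"Draft possible solutions for ticket {payload['ticket_id']}"
```

Nobody has to remember to invoke anything. The event the process already produces is the only trigger.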

Take action: three hooks worth installing

Here are some specific hooks worth installing in your organization:

On every new Jira ticket, run a product management review. When a ticket is created, a PM agent reads the spec and flags missing design components, ambiguous acceptance criteria, or gaps in the user story. It posts the gaps as a comment. It does not block the ticket. It just makes the next conversation a sharper one.
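To make the "comment, don't block" behavior concrete, here is a rule-based stand-in for the PM agent. The checklist of required sections is an assumption for the example, and a real agent would read the spec with a language model and post through your ticketing system's API, which this sketch deliberately leaves out.

```python
import re
from typing import Optional

# Hypothetical checklist: section headings a complete spec is assumed to contain.
REQUIRED_SECTIONS = ("User story", "Acceptance criteria", "Design")


def review_ticket(description: str) -> Optional[str]:
    """Return a comment listing missing sections, or None if nothing is missing."""
    missing = [
        section
        for section in REQUIRED_SECTIONS
        if not re.search(
            rf"^\s*#*\s*{re.escape(section)}\b",
            description,
            flags=re.IGNORECASE | re.MULTILINE,
        )
    ]
    if not missing:
        return None
    return "Possible gaps before refinement: " + ", ".join(missing)
```

The function only ever returns text for a comment. There is no code path that rejects or closes the ticket.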

When an A/B test is set up, run a statistics review. When a team puts up a test plan, a statistics agent checks the sample size, the success and failure criteria, and whether the comparison is even valid. There’s no reason to still be making product decisions off of misunderstood data. (If you need a refresher on why this is important, Statistics for Product Managers is a useful primer.)
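One check such an agent might run is the sample-size check. The sketch below uses the standard normal-approximation formula for comparing two conversion rates; the function names are hypothetical, and the defaults (a two-sided 5 percent significance level and 80 percent power) are conventional assumptions, not something your test plan necessarily uses.

```python
from math import ceil
from statistics import NormalDist


def required_sample_size(p_control: float, p_variant: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Per-arm sample size for a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    effect = p_variant - p_control  # must be non-zero
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


def review_test_plan(planned_per_arm: int, p_control: float, p_variant: float) -> str:
    """Compare the planned sample size with the estimate and word the comment."""
    needed = required_sample_size(p_control, p_variant)
    if planned_per_arm >= needed:
        return f"OK: {planned_per_arm} per arm meets the estimated {needed}."
    return f"Underpowered: {planned_per_arm} per arm, estimated need is {needed}."
```

For example, detecting a lift from a 10 percent to a 12 percent conversion rate needs a few thousand users per arm under these assumptions, so a plan with 1,000 per arm would get an "Underpowered" comment.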

On every demo day, run a note-taking and lessons-learned agent. When the demo session starts, an agent listens, reviews the presentation material, takes notes, and posts the summary back to the team channel afterward. Then it logs detailed notes into the company wiki (or Athenaeum). The presenters are not asked to write up their own demo. The audience is not asked to remember what they saw three weeks later. The institutional memory just shows up.

None of these hooks ask anyone to use AI to replace their core craft. None of them mandate how many tokens you must use per day. None of them require a rollout plan with a target curve. Each hook takes one event the organization already produces and puts a useful specialist in the room for that event.

That is the version of AI adoption that works. Pick the hook. Make it genuinely helpful. Let trust accrue. Add the next hook.

But some watch-outs…

Don’t ask for new permissions

When working in a big org, it’s important that these hooks don’t fundamentally do anything new. Ideally they are just writing comments and giving tips.

The moment your agents start doing real work, you’re going to have to talk to IT security, maybe the legal team, maybe a compliance officer. That will stop any AI adoption effort in its tracks.

I personally tried to help a colleague at a large bank figure out what Microsoft Copilot could do. It was so locked down that it could search the web or search internal company documents, but it couldn’t do both in the same chat, let alone collate the two data sets. It was shockingly useless.

Your first hooks should be benign by design.

Don’t hook the part of the job people are proud of

Organizations keep trying to get AI to do the core work that humans most enjoy and are proud of.

A designer is asked to just use AI to create slop landing pages instead of crafting a great user experience. An engineer is given requirements to complete and told not to actually write any code. A salesperson is told to have AI spam their client lists.

As Joanna Maciejewska wrote, “I want AI to do my laundry and dishes so that I can do art and writing, not for AI to do my art and writing so that I can do my laundry and dishes.”

Of course people are rebelling.

A good hook augments where the human is weakest, not where they are strongest. To avoid pushback, install AI at the part of the job where humans hate the work, not where they find joy, value, and purpose.

Measure impact, not adoption

Measure what actually matters.

If you find the right hook and pick a use case that actually brings value to your employees, you’ll find fast adoption. But if people don’t actually implement the suggestions your agents make, it won’t make any difference.

So don’t just measure the number of agentic comments (a vanity metric) or what percentage of people read them (slightly better but still not great). Measure the outcome.

- Are there fewer design reviews after you implement an automatic design audit?

- Do new employees have a higher engagement rate after onboarding?

- Are your customers more satisfied and renewing?

Optimize only for cost-cutting and you’ll find that eroding quality wipes out any efficiency gains. You’ll optimize into a death spiral of lower quality -> lower revenue -> more cost-cutting -> lower quality.

The goal is not to implement AI. It’s to build a better business and more value for your shareholders, your customers, and your teammates.

Frequently Asked Questions

Why do AI mandates backfire?

Employees correctly read mandates as cost-cutting signals. Klarna reversed an AI customer-service rollout after quality fell. Duolingo’s CEO walked back an AI-first memo when staff rebelled. Chinese workers are publicly sabotaging the documentation their employers want to feed AI. The mandate frames the human as the problem to be solved, and people route around it.

What is an organizational hook?

An organizational hook is an event your business processes already produce (a new Jira ticket, a hire, a customer support email) where a small AI agent can fire and do one specific helpful thing. The agent shows up at that event, runs a narrow review or summary, and leaves. People don’t have to remember to use it. They don’t have to learn a new tool.

Where should I not deploy AI in my organization?

Don’t ask people to use AI for the part of their job they’re proud of. Asking a designer to generate slop landing pages or a writer to have AI write their report is asking them to fire themselves. A good hook augments where humans are weakest, not strongest. Hook the chore, not the craft.

How do I measure AI adoption?

Don’t measure tokens used or comments produced. Those are vanity metrics. Measure outcomes. Are there fewer design reviews after the design audit hook? Are new employees more engaged after onboarding? Are customers more satisfied? Tokens consumed tells you nothing about whether the work got better.

What’s an example of an AI hook that already works?

Sentry’s Seer is a code-review agent that runs as a hook on every pull request. It posts a comment with concrete suggestions and copy-paste prompts. It doesn’t block the merge or nag the developer. The developer keeps full discretion. The hook fires at an event the work already produces, and earns trust before asking for compliance.

When this is the right conversation to have

If you are running an AI adoption program inside a large organization and you can already feel your approach not landing, that’s what we’re working on these days. Executive coaching with Kromatic is one of the places heads of innovation and product leaders work through what to hook, what to leave alone, and how to roll out something the team will actually thank you for.
