Wizard of Oz Prototype Example: Why Our 82.9% Survey Lied

What happens when everyone says yes but nobody actually does the thing

Wow, I’m tired. Tired and energized. In the past few weeks we’ve had the typical ups and downs of every startup. We’ve let in a number of alpha users, got another hundred signed up thanks to a very minor TechCrunch mention, and realized our Minimum Viable Product wasn’t minimum enough.

We started building our site on a basic premise: entrepreneurs are willing to post their pitches to attract co-founders and investment. We confirmed this hypothesis through numerous customer interviews and surveys. We iterated on nothing more than Photoshop mockups. We test-drove our site with a limited number of alpha users, and despite the clunky interface we were pleased by the results. We pushed people through a rather long survey and got an 82.9% completion rate, far higher than we would’ve thought. So we knew that people were willing to fill out some forms.

So you can imagine our surprise when our first chunk of 50 alpha users decided not to post any business ideas or pitches. Oops.

Behavior Matters

We now realize that social proof and user experience are going to be more and more critical for us. Without a few hundred people posting ideas and pitches, new visitors to the site won’t bother to post their own. It’s a classic chicken-and-egg problem, which makes our alpha testing more difficult. Also a classic confirmation of the behaviorist maxim:

So aside from pondering whether or not our minds are epiphenomenal to our behavior (yes, I’m a philosophy geek), where does that leave us? Without getting a basic sense of how many people are willing to put in data, we can’t adequately test our other assumptions regarding interaction with the website. So we need to get more basic.

Pay No Attention

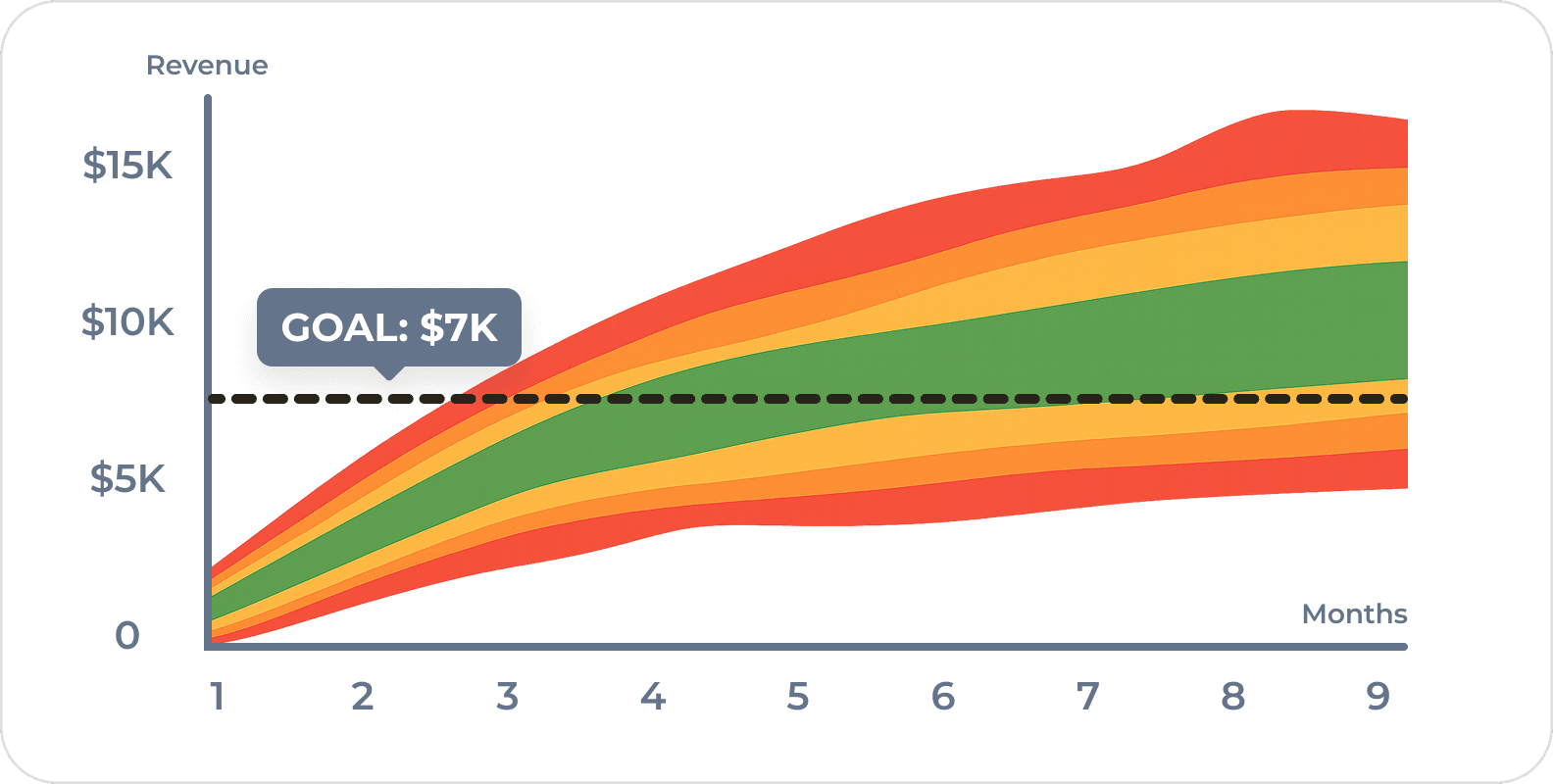

Aardvark allegedly ran for 9 months in Wizard of Oz mode, meaning that a number of employees sat around looking up answers to questions manually and punching them in. A number of other services seem to do this as well, using Mechanical Turk or a small army of outsourcers. The point of doing things that way is to see if someone is willing to use or pay for your service before you waste a lot of time building something obscenely complicated.

We thought we were doing that by testing our assumptions with customer interviews, mockups, and alpha testing. So we’ve decided to simplify even more and focus on the most basic type of co-founder matching. We’ve got a few hundred entrepreneurs. We’re going to match them up one by one. It may take a while.

We’re doing this to test a basic assumption that we skipped before. We always asked if entrepreneurs were willing to post their ideas. What we forgot to ask is, “Is anyone willing to read them?” So far I’ve got a 90% “Yes”. Still, “fool me once…”

We’ll test behavior instead of survey responses by sending out pitches via email, directly to people who’ve expressed an interest. We’ll measure how many people open the emails and how many actually click through and read the full story. We hope we’ll find the unsubscribe rate is as low as our monthly mailer’s (<1%). I’ll settle for <10%. :)

Got a pitch? Want in on the action? Go to our home page and sign up now!

Quick Answer: A Wizard of Oz prototype lets you manually deliver a service before building the technology, testing real user behavior instead of relying on stated intent. As product managers, we learned this lesson the hard way: interviews and surveys said entrepreneurs would post pitches, and 82.9% of people completed our long survey, but when our first 50 alpha users got access, none actually posted. By manually matching entrepreneurs one by one and measuring email opens and click-throughs, we can test our core assumption (will anyone actually read these pitches?) through behavior, not opinions.

Frequently Asked Questions

What is a Wizard of Oz prototype example in a real startup?

A well-known Wizard of Oz prototype example is Aardvark, which allegedly ran for 9 months with employees manually looking up answers to user questions before building automated systems. In our own case, we chose to match entrepreneurs manually, one by one, instead of building complex matching software, so we could test whether people would actually engage before investing in full development.

Why would users complete surveys but then not use the actual product?

As product managers, we need to remember that what people say in surveys doesn’t always match their real behavior. We had an 82.9% survey completion rate, which gave us false confidence. But when 50 alpha users were asked to actually post business ideas, none did. Stated willingness and actual behavior are fundamentally different things, which is why testing real actions matters more than collecting opinions.

How do you solve the chicken and egg problem with a new marketplace?

Social proof is critical — without existing content, new users won’t contribute their own. We addressed this by going more basic: instead of waiting for users to post pitches organically, we manually matched entrepreneurs one by one and sent pitches directly via email. This let us test engagement behavior without needing a critical mass of content already on the platform.

How do you test assumptions when your MVP isn’t working?

Simplify further. We thought we’d validated demand through customer interviews, mockups, and alpha testing, but we missed a key assumption: whether anyone would actually read posted pitches. So we stripped our test down to email — sending pitches directly to interested entrepreneurs and measuring open rates, click-throughs, and unsubscribe rates. Measuring real behavior, not survey responses, gives us trustworthy signal.
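The email test above comes down to three ratios and one pass/fail check. Here is a minimal Python sketch of how they might be tallied. The class, field names, and verdict labels are all hypothetical and not part of any tool we actually used. The only numbers taken from the post are the two unsubscribe thresholds: under 1% is what we hope for, under 10% is what we would settle for.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class EmailTest:
    """Raw counts from one pitch mailing (hypothetical field names)."""
    delivered: int
    opened: int
    clicked: int
    unsubscribed: int

    def rates(self) -> Dict[str, float]:
        """Express each behavior as a share of delivered emails."""
        if self.delivered <= 0:
            raise ValueError("delivered must be positive")
        return {
            "open_rate": self.opened / self.delivered,
            "click_through_rate": self.clicked / self.delivered,
            "unsubscribe_rate": self.unsubscribed / self.delivered,
        }


def unsubscribe_verdict(rate: float) -> str:
    """Apply the post's two thresholds: <1% hoped for, <10% acceptable."""
    if rate < 0.01:
        return "on par with the monthly mailer"
    if rate < 0.10:
        return "acceptable"
    return "too high"


if __name__ == "__main__":
    # Invented counts, for illustration only; these are not real results.
    test = EmailTest(delivered=200, opened=90, clicked=30, unsubscribed=4)
    for name, value in test.rates().items():
        print(f"{name}: {value:.1%}")
    print(unsubscribe_verdict(test.rates()["unsubscribe_rate"]))
```

Dividing everything by delivered emails keeps the three rates comparable. Dividing clicks by opens instead would answer a slightly different question: of the people who opened, how many cared enough to read the full pitch.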

What’s the difference between testing with surveys and testing with behavior?

Surveys capture intent; behavioral tests capture action. We learned this the hard way — our survey showed strong willingness to post pitches, but real users posted nothing. The fix was shifting to behavioral metrics like email open rates and click-throughs, which reveal what people actually do rather than what they claim they’d do.
