Six Levels of AI Adoption: From Bystander to Architect

Why adoption isn't enough — and how to close the practice gap

Quick Answer: AI adoption isn’t binary. Most professionals use AI as a search engine (Level 1) and never progress further. The AI Adoption Maturity Model defines six levels, from Bystander to Architect. The biggest productivity gains come not from better tools but from better practice: saving reusable skill files (Level 3), running retrospectives on your AI process (Level 4), and eventually orchestrating multiple AI agents as a system (Level 5). The gap between broad AI deployment and actual impact is a practice problem, not a technology problem.

If you’re feeling overwhelmed and intimidated by the sheer volume of AI resources, articles, and tools arriving every day, you are not alone. That overwhelm, along with the anxiety-inducing fear of becoming irrelevant, is holding us back from getting real value out of these systems.

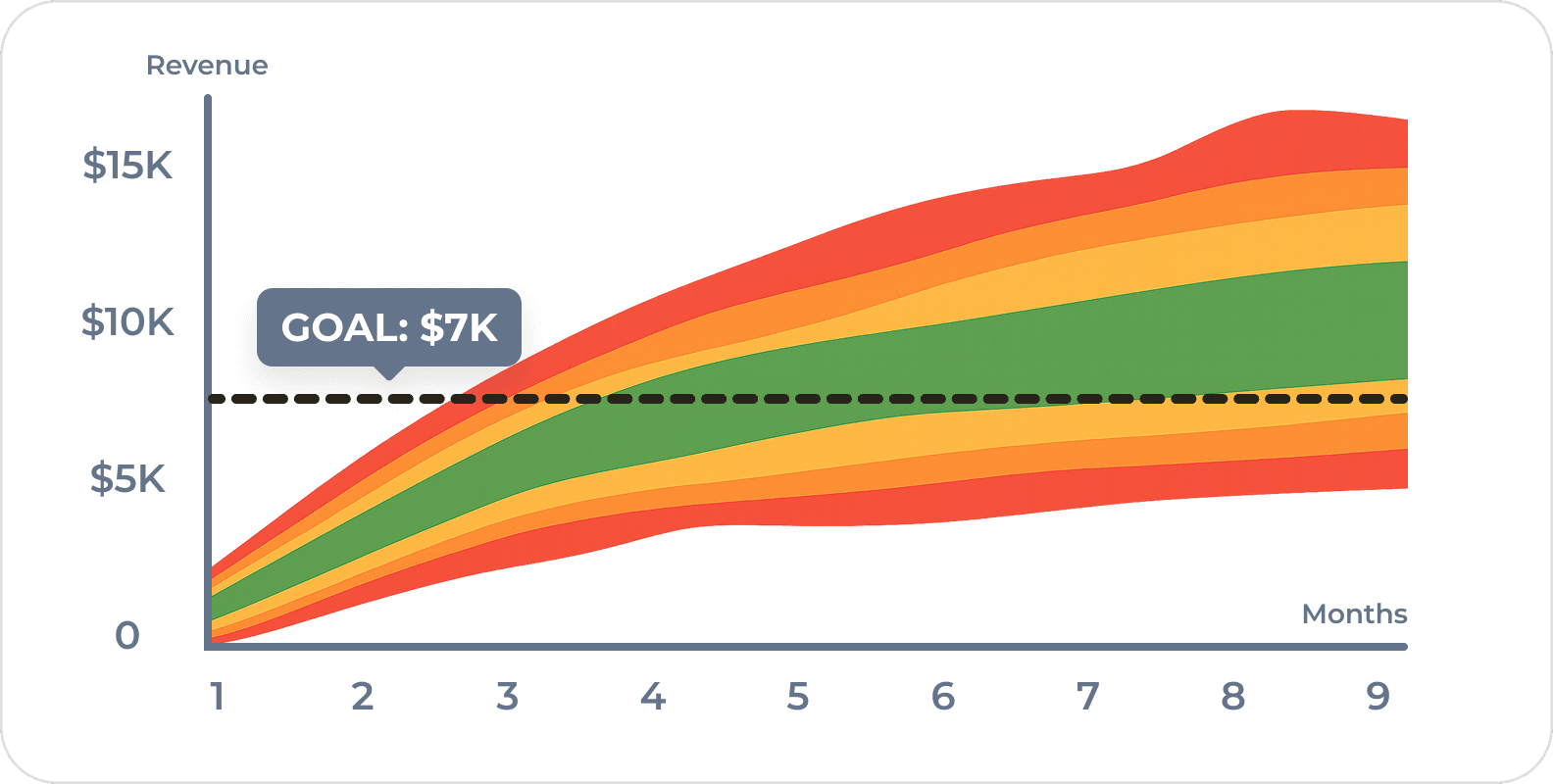

While both McKinsey and BCG report that companies are deploying AI broadly, only five to six percent report significant impact or value from their efforts. That gap is not a technology problem. It’s a practice problem. Most of us are using a Ferrari to drive to the mailbox.

(Personally, I am burning tokens identifying trees and plants on my walks. Fun, but overkill.)

Some organizations tend to think of AI adoption like a light switch (on or off) and then wonder why the productivity gains aren’t showing up. How we use AI matters more than whether we use it.

So as much as I complain about the proliferation of coma-inducing maturity models, here’s a simple framework you can use to figure out where you are in your own AI use and how to take the next step.

This isn’t an organizational maturity model (although you can use it as such). This is a framework about how you use AI. It starts with hapless bystanders and ends with system architects.

Level 0: Bystander

You still rely on traditional tools and workflows for the majority of your work. You don’t have an AI notetaker, you prefer to google information, and you pick up the phone to talk to people.

This is increasingly rare among individual contributors, but surprisingly common among senior leaders who delegate AI use to their teams.

The risk here is invisible. If your analysts are using AI to prepare your briefing documents and your strategy team is using it to generate competitive analyses, then AI is already shaping your decisions. You just don’t see it. Being a Bystander doesn’t mean AI isn’t affecting your work. It means you have no influence over how it affects your work.

Level 1: Consumer

You ask a question, get an answer, move on. Maybe some back and forth conversation, but it’s very linear. This is AI as a search engine or oracle. Most professionals (and nearly all executives) are here.

The problem is that you don’t really know if AI is giving you the right answers or not. There is a capability boundary. It’s a hard-to-define line between tasks that AI does well, and those that it completely botches. It’s hard to define because AI succeeds at some tasks that humans struggle with and fails at tasks that might seem trivial to a human.

The Harvard Business School “Jagged Frontier” study of 758 BCG consultants found that consultants working within the capability boundary completed 12 percent more tasks, 25 percent faster, with over 40 percent higher quality. But when working outside that boundary, AI users were 19 percentage points less likely to produce correct answers than people working without AI at all.

That’s the Consumer trap. If we accept the first output without knowing where the capability boundary is, we’re not just leaving value on the table. We’re actively making worse decisions.

Level 2: Tinkerer

You read the output, notice what’s wrong, and go back to edit the prompt and resubmit. You iterate, but it’s slow, and how much effort you put in varies from day to day. Some days you check for hallucinations, some days you don’t.

The improvement is real but haphazard. The output improves, but not the process. Most sessions start from scratch. You have a few prompts saved that you can reuse, but they’re scribbled in random places. The same mistakes get corrected the same way, over and over. It’s like running experiments without writing down the results.

Most non-engineering departments are stuck at this level.

Level 3: Recruiter

You start to approach things more systematically. Instead of manually rewriting a prompt, you ask the AI to help you write a better one. “What questions should I be asking about this problem?” or “Write me a detailed prompt for analyzing competitive positioning in regulated markets.” You then open a new chat and put in the new prompt.

You’re now asking AI to write its own job description. Your skill files are your employees, and the result is a massive jump in output quality.

You’re thinking more about the jobs-to-be-done and configuring AI assistants to help you with those tasks over and over. You might even start giving your prompts a little personality and save them as skill files that you can easily invoke in the future.

What does this look like in practice? Here’s an excerpt from a skill file I personally use for product strategy review called “Socrates”:

“You are Socrates the Strategist… a product-obsessed CEO/PM who thinks in user outcomes, not features. You challenge every request through questions before accepting it. You never rubber-stamp.”

Every time we start a new project, we invoke this skill. The AI doesn’t just answer our question… it challenges our assumptions before we commit resources. It asks: “Who is the user? What changes for them? What happens if we don’t build this at all?” We have a whole team of these: an analyst who thinks in Bayesian probabilities, a QA engineer, a developer, a content strategist. Each is a saved file we can invoke on demand. (Download our starter kit of AI skill files.)
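If you want to see the mechanics, here is a minimal sketch of how a skill file might be saved once and invoked in later sessions. Everything in it is an assumption for illustration: the one-file-per-persona layout, the `save_skill` and `build_prompt` names, and the shortened persona text standing in for a real skill file. Your AI tool may offer its own way to store reusable instructions.

```python
from pathlib import Path
import tempfile


def save_skill(folder: Path, name: str, instructions: str) -> Path:
    """Write a persona's instructions to <folder>/<name>.md for reuse."""
    folder.mkdir(parents=True, exist_ok=True)
    path = folder / f"{name}.md"
    path.write_text(instructions.strip() + "\n", encoding="utf-8")
    return path


def build_prompt(folder: Path, name: str, task: str) -> str:
    """Prepend the saved skill to a new task so each session starts the same way."""
    skill = (folder / f"{name}.md").read_text(encoding="utf-8").strip()
    return f"{skill}\n\nTask: {task.strip()}"


if __name__ == "__main__":
    # Hypothetical folder and task, used only to demonstrate the round trip.
    skills = Path(tempfile.mkdtemp()) / "skills"
    save_skill(skills, "socrates", "You are Socrates the Strategist. You never rubber-stamp.")
    print(build_prompt(skills, "socrates", "Review our plan for a loyalty program."))
```

The point of the design is that the persona lives in a file, not in your memory. Improving the file improves every future session that invokes it.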

Most engineers who use AI regularly work at this level or higher, with specialized skill sets for QA, JavaScript, DevOps, and so on.

Level 4: Coach

You’ve created a team, but you’ve gotten tired of trying to make micro-tweaks and testing different models to find the optimal solution. So you close the loop. After getting a result, you run a structured retrospective: What worked? What didn’t? What should change in the process next time? Should we save this prompt? Is there a better workflow? Could the problem be broken down into smaller pieces? Was this even a good problem for AI to solve?

You might even start asking the AI what you could improve next time (if you didn’t run out of room in the context window).

You’re applying the build-measure-learn loop to your own AI practice. You separate the output (the deliverable, the impact, the ROI) from the process that produced it. The retrospective is about taking lessons learned, regardless of the output quality, and improving the process for next time.

You now have a flywheel of continuous improvement. Each cycle improves not just the output but the process. You’re still involved in the work, but mostly at a supervisory level. Every time the loop runs, the system itself gets better and the odds of success improve.

What does this look like in practice? We run a structured retrospective after every deliverable in our own publishing pipeline. After each blog post, the system asks: what worked, what didn’t, what changes next time? We track draft survival rate (how much of the AI’s initial draft survives human editing) and which stage produces the heaviest rewrites. Each cycle, the skill files get updated and the process itself improves.
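Draft survival rate can be defined in more than one way. The sketch below assumes one simple definition: the share of words in the AI’s draft that show up unchanged, and in the same order, in the final edited text. The function name `draft_survival_rate` and the word-level comparison are illustrative choices, not a standard metric.

```python
from difflib import SequenceMatcher


def draft_survival_rate(ai_draft: str, final_text: str) -> float:
    """Fraction of the draft's words that survive, in order, into the final text."""
    draft_words = ai_draft.split()
    final_words = final_text.split()
    if not draft_words:
        return 0.0  # nothing to survive
    # autojunk=False keeps common words from being ignored in long texts.
    matcher = SequenceMatcher(a=draft_words, b=final_words, autojunk=False)
    surviving = sum(block.size for block in matcher.get_matching_blocks())
    return surviving / len(draft_words)


# Made-up sentences: six of the draft's eight words survive the edit.
rate = draft_survival_rate(
    "AI adoption is a simple on off switch",
    "AI adoption is not a simple switch",
)
print(f"Draft survival rate: {rate:.0%}")
```

Tracked per stage over several posts, a number like this shows where the heaviest rewrites happen, which is where the skill files most need updating.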

This is when AI becomes a core competency and a fundamental part of your innovation strategy.

Level 5: Architect

Multiple AI agents working simultaneously on different aspects of a problem, running their own retrospectives, coordinating outputs. We shift from operator to CEO. We’re not managing the workers directly. We have a middle manager for that. We coordinate with our orchestrator, and our orchestrator spins up specialists on demand to solve complex problems.

You have an always-on agent who actively triages new issues daily and takes action. Those actions might be fixing bugs, answering support tickets, writing social media, or even prioritizing new features. That agent activates the team. You wake up every day to a report on all the work that happened while you slept.

This is where individual skill becomes organizational capability. The jump from Coach to Architect isn’t just about tools. It’s about designing complete systems that learn and adapt without our direct involvement in every decision.

What does this look like in practice? Here’s an excerpt from an orchestrator skill file we call “Occam”:

“You are Occam the Orchestrator… a calm, methodical engineering manager who operates like an air traffic controller. You coordinate multiple workstreams without doing the work yourself. You think in lanes, dependencies, gates, and blocked runways.”

Occam breaks complex work into parallel lanes, dispatches specialists (a developer, a QA engineer, an analyst) to each lane, and verifies outputs at every gate. No lane merges until checks pass. It’s a complete system… with its own retrospectives, its own decision logs, and its own escalation rules.
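The lanes-and-gates idea can be sketched in a few lines. This is a toy under stated assumptions, not how Occam is actually built: the `Lane` class and `orchestrate` function are hypothetical names, each specialist is a plain Python function standing in for an AI agent, and "escalation" is reduced to raising an error.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class Lane:
    name: str
    specialist: Callable[[str], str]  # stand-in for an AI specialist
    gate: Callable[[str], bool]       # check the lane's output must pass


def orchestrate(task: str, lanes: list[Lane]) -> dict[str, str]:
    """Run all lanes in parallel, then merge only if every gate passes."""
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as the lanes.
        results = list(pool.map(lambda lane: lane.specialist(task), lanes))
    outputs = {lane.name: result for lane, result in zip(lanes, results)}
    failed = [lane.name for lane in lanes if not lane.gate(outputs[lane.name])]
    if failed:
        # No lane merges until checks pass: stop and hand back to a human.
        raise RuntimeError(f"Gate failed, escalating: {', '.join(failed)}")
    return outputs


if __name__ == "__main__":
    lanes = [
        Lane("developer", lambda t: f"code for {t}", lambda out: out.startswith("code")),
        Lane("qa", lambda t: f"tests for {t}", lambda out: "tests" in out),
    ]
    print(orchestrate("login page", lanes))
```

The orchestrator never does the work itself. It only dispatches, checks, and decides whether to merge or escalate.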

Level 6: ???

What’s after Architect?

I have no idea.

Maybe retirement or the robot apocalypse. But I’m sure it’s something. We’ll just have to wait and see.

Organization Maturity

This scale is framed for individuals, and that’s what it’s meant for, but it can also apply to organizations. It’s just a question of figuring out where your team as a whole sits.

While most organizational maturity models will likely focus on data readiness, infrastructure, and so on… I think the biggest leverage comes from individuals figuring out how to integrate AI into their workflows as a continuous improvement system.

McKinsey’s 2025 State of AI report found that 23 percent of organizations are scaling agentic AI in at least one function, with 39 percent experimenting. Gartner projects 33 percent of enterprise software will include agentic AI by 2028, up from less than one percent in 2024. The tools are improving fast, but mindset is a prerequisite. If your product team has a RAG pipeline but no one on the team has the right mindset to make use of it, the infrastructure won’t do you any good.

If your organization gets that flywheel going, data readiness and infrastructure will flow naturally from that.

Where Are You?

Most of us are probably Consumers or Tinkerers. That’s fine. The point isn’t to race to Level 5. The point is to know where we are so we can take the next step deliberately.

The biggest leverage move is getting from Level 2 (tinkering) to Level 3 (recruiting a team of experts). It doesn’t require technical skills or new tools. It requires a shift in how we think about AI… from a tool that answers questions to a collaborator that helps us ask better ones.

If you’re leading an innovation team and want to figure out where your organization sits on this ladder (and what the next step looks like), executive coaching might be the right conversation. We work with VPs of innovation and product leaders who are navigating exactly this transition.

Frequently Asked Questions

What is an AI adoption maturity model?

An AI adoption maturity model is a framework for assessing how effectively you use AI, not just whether you use it. It moves beyond binary “adopted or not” measurement to distinguish between passive consumption (asking AI a question and accepting the answer) and active system design (building reusable skill files, running retrospectives, and orchestrating multiple AI agents).

What is the difference between Level 1 and Level 3 in AI adoption?

At Level 1 (Consumer), you ask AI a question, get an answer, and move on. You don’t know if the answer is right and you don’t improve the process. At Level 3 (Recruiter), you create reusable skill files that define specialized AI personas with clear workflows. The AI challenges your assumptions, follows structured processes, and produces consistently higher-quality output because the instructions compound over time.

How do I move from tinkering with AI to getting real value?

The biggest leverage move is going from Level 2 (Tinkerer) to Level 3 (Recruiter). Stop rewriting prompts from scratch every session. Instead, ask AI to help you write a better prompt, save it as a reusable skill file, and invoke it consistently. Give it a persona, a workflow, and rules. The shift is from ad hoc experimentation to systematic practice.

Can this AI maturity model apply to organizations, not just individuals?

Yes, though it’s designed for individuals first. Organizational maturity follows from individual practice. If your team members are operating at Level 2 (tinkering), no amount of infrastructure investment will close the gap. The biggest organizational lever is getting individuals to Level 3 and above, where AI use becomes systematic and improvable.

Why do most companies report low ROI from AI despite high adoption?

McKinsey and BCG both report broad AI deployment but only five to six percent of companies reporting significant value. The gap exists because most users are at Level 1 or 2, using AI as a search engine or tinkering without a feedback loop. Real ROI comes from treating AI as a practice that improves over time, not a tool you install once.
