Experiment Knowledge Management: Your AI Agents Have Amnesia

Stop rediscovering old research and experiments. Your agents and your product team both need a reliable knowledge base.

Quick Answer: A RAG (retrieval-augmented generation) system indexes your past experiments so that both your team and your AI agents can retrieve validated learnings instantly. Instead of repeating expensive research or rediscovering old mistakes, each new experiment builds on the last. At minimum, each record should capture the question, the data collected, the plan, and the results and insights. A basic version can be built in a day using off-the-shelf tools with no engineering team required.

AI agents are about to multiply the rediscovery tax inside organizations.

We know that organizations that don’t learn will always struggle to compete against agile ones.

That’s always been true.

What’s new is that the learning loop companies strive for is no longer just two-pizza teams running retrospectives and sharing learnings in sprint demos. LLM agents are moving into the workforce and replicating organizational design challenges at scale.

Agents forget. A new session is a brand new brain. How many times is an agent answering the same question for different people? How many identical research reports is it creating?

Are agents running retrospectives? Are they learning from their mistakes? Are they sharing information with their colleagues (and with us)?

To survive in an AI-first world, organizations will have to adapt the practices that propelled learning organizations like Toyota and Amazon to sustained competitive advantage. At a minimum, the product management organization should be building a vector-based database of institutional knowledge that agents (and humans!) can actually learn from.

For the AI world, that’s called Retrieval-Augmented Generation (RAG). It’s a critical tool for a product management team.

The Learning Loop Has a New Failure Mode

Most organizations have historically failed at knowledge management.

Every innovation team has paid the rediscovery tax. A new team member joins. A new sprint begins. A new AI assistant gets spun up. And somewhere in a shared drive, in a deck from two years (or perhaps two weeks) ago, is the exact experiment that already answered the question everyone is now arguing about.

(The number of times I’ve personally had to reaudit our newsletter experiment log defies belief.)

The rediscovery tax used to scale with headcount. Now it scales with AI usage.

Every time an agent starts a new session, it begins with a blank slate. Depending on which plan tier and LLM you’re using, you might have seen “memories” popping up to retain critical information. But those memories don’t automatically port to other agents or to your coworkers. The memory stack is still in its infancy.

If ten agents are running across a product organization, there’s a real chance that the rediscovery tax is being paid ten times simultaneously. So you might be moving fast and breaking things, but you might just be breaking the same thing repeatedly.

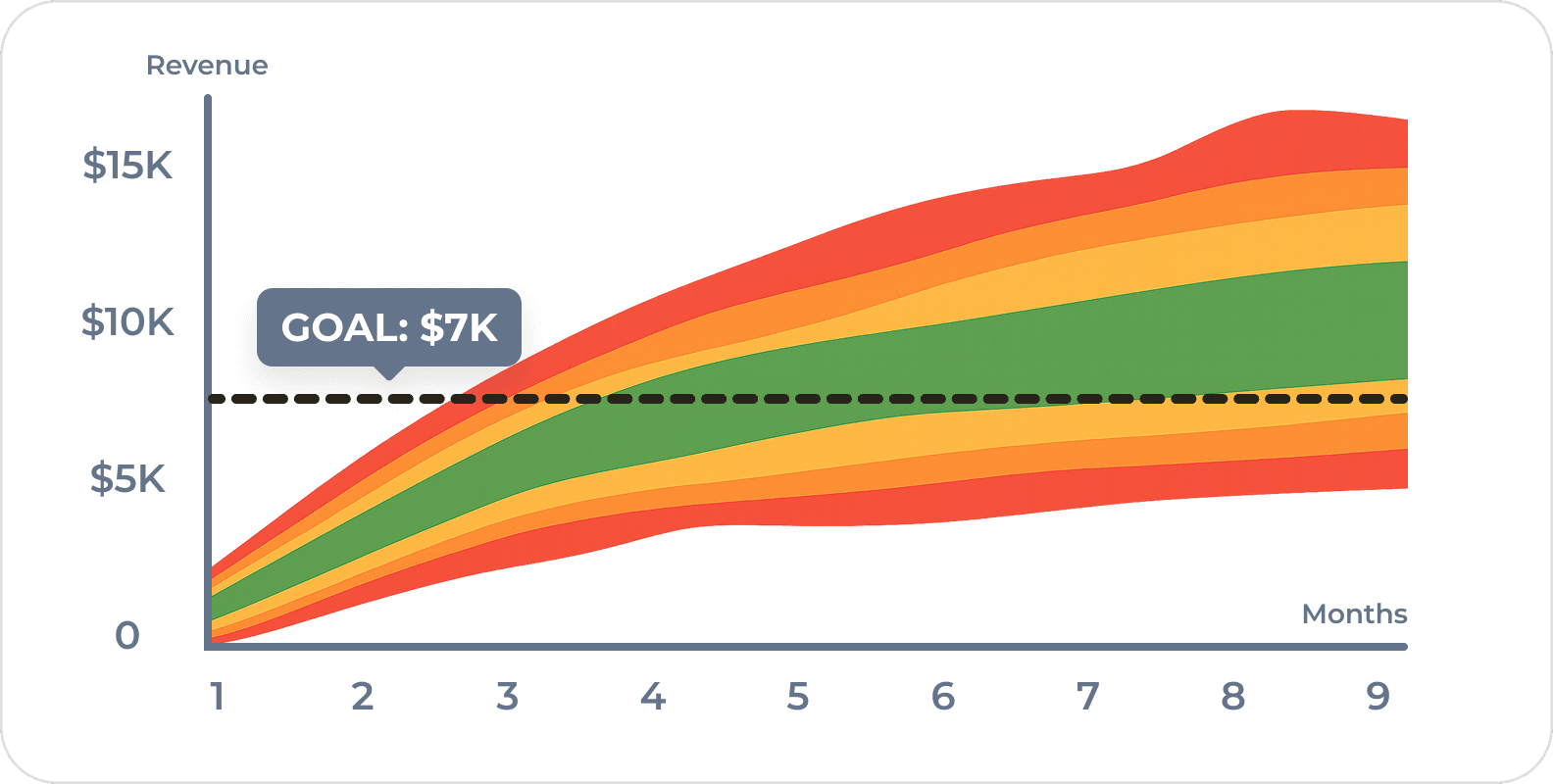

Experiment velocity is important. Moving fast matters. But velocity isn’t the goal. We don’t just need speed. We need acceleration.

Learning compounds to create acceleration.

What Toyota and Amazon Figured Out

The companies that have built durable competitive advantages aren’t necessarily the ones with the best ideas. They’re the ones with the best institutional memory systems.

Toyota’s production system is legendary, but its knowledge management practices are underrated. The concept of yokoten, horizontal knowledge transfer across teams and factories, was explicitly designed to solve the same problem. When one plant improved a process through kaizen, the question wasn’t just “did it work?” but “how do we make sure every plant learns what plant three learned?” Toyota’s answer was systematic: document the experiment, transfer the learning, update the standard.

The irony of continuous improvement is that it makes recording changes hard… processes are always evolving. Toyota University was partly the answer to that: formalizing tacit knowledge into retrievable institutional capital.

Amazon built a different version of the same thing. Jeff Bezos has described his view clearly: “Our success at Amazon is a function of how many experiments we do per year, per month, per week, per day.”

But running thousands of experiments per year only compounds if the findings are retained and reused, and nobody can hold all of that in their head. That’s why Amazon built Weblab, internal infrastructure specifically designed to reduce the cost of experiments and make results retrievable across teams. AWS, Prime, and Echo weren’t just lucky breaks. They were the payoffs from a system designed to run thousands of bets cheaply and remember what each one found.

Netflix publishes extensively about its experimentation platform for the same reason. Their data scientists don’t just analyze individual test results. They look for patterns across tests to build domain expertise over time.

The common thread across all of these organizations is that they built infrastructure to make institutional knowledge retrievable, not just collectable. These systems solved the retrieval problem manually. RAG lets us automate parts of it.

Why Experiments Are the Highest-Value Knowledge Asset

Traditional search fails for experiment archives because it depends on exact keywords. The questions teams ask rarely match the words used in the original report. An experiment titled “price sensitivity test on onboarding tier” won’t appear when someone searches “did we test charging $50 for this?” even though it’s exactly the relevant experiment.

RAG systems use embedding-based retrieval: they represent documents and queries as vectors that capture semantic meaning rather than literal words. Instead of matching text strings, the system retrieves experiments that are conceptually similar to the question being asked. That makes it possible for agents (or humans) to ask natural questions and retrieve the most relevant prior experiments.
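
To make the difference concrete, here is a minimal Python sketch. It is a toy, not a real embedding model: the `CONCEPTS` table is a hand-written, hypothetical stand-in for the semantic knowledge a trained embedding model would supply, the second experiment title is invented for contrast, and all function names are illustrative. It only shows the mechanics: a strict keyword match misses the pricing experiment, while cosine similarity over concept vectors ranks it first.

```python
import math
import re

# Hypothetical stand-in for a trained embedding model: each word maps to a
# hand-picked concept. A real system would call an embedding model instead.
CONCEPTS = {
    "price": "pricing", "charging": "pricing", "pay": "pricing",
    "sensitivity": "pricing", "$50": "pricing",
    "test": "experiment", "tested": "experiment", "experiment": "experiment",
    "onboarding": "onboarding", "signup": "onboarding",
    "newsletter": "email", "subject": "email", "email": "email",
}
DIMENSIONS = sorted(set(CONCEPTS.values()))


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9$]+", text.lower())


def embed(text):
    """Count how often each concept appears; this is the toy 'vector'."""
    counts = dict.fromkeys(DIMENSIONS, 0)
    for word in tokenize(text):
        concept = CONCEPTS.get(word)
        if concept:
            counts[concept] += 1
    return [counts[d] for d in DIMENSIONS]


def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_search(query, titles):
    """Strict keyword search: a title matches only if it has every query word."""
    words = set(tokenize(query))
    return [t for t in titles if words <= set(tokenize(t))]


def semantic_search(query, titles):
    """Rank titles by cosine similarity to the query, best match first."""
    q = embed(query)
    return sorted(titles, key=lambda t: cosine(q, embed(t)), reverse=True)


titles = [
    "price sensitivity test on onboarding tier",
    "newsletter subject line experiment",  # invented title, for contrast
]
query = "did we test charging $50 for this?"

print(keyword_search(query, titles))      # [] because no title has every word
print(semantic_search(query, titles)[0])  # the pricing experiment ranks first
```

In practice the off-the-shelf tools discussed later handle the embedding step for you, so nobody has to maintain a table like `CONCEPTS` by hand.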

But not all institutional knowledge is equally useful in a RAG system.

Strategy documents go stale. Meeting notes are context-dependent. Slack threads are unsearchable noise. But experiment records have a property that most institutional knowledge lacks: they’re structured around questions and answers. That makes them uniquely retrievable and reusable.

An experiment record answers at least three things a new team (or agent) will always want to know: what did we believe, what did we find, and what did we decide to do about it. If the record also captures the fail condition, the threshold that would have changed the decision, it becomes an even more powerful retrieval target. “Did we already test whether customers would pay $50 for this?” is a question a RAG can answer in seconds. “What was the general mood around pricing last year?” is not.

The problem isn’t that organizations don’t run experiments. It’s that they don’t record them in a way that makes them retrievable. Results live in scattered slide decks. Hypotheses live in someone’s head. Fail conditions, the most diagnostic piece of information, are almost never written down at all.

What to Put in Your Experiment Knowledge Base

The good news is that we don’t need to reconstruct our entire experiment history from scratch. We just need a format going forward that captures a meaningful signal.

At a minimum, we should have a simple experiment template:

- Question: the question we need to answer with our experiment or research

- Data: what data we will collect to answer that question or (in)validate that hypothesis

- Plan: what we actually did (the experiment type, approach, methodology)

- Results & Insights: what we found, what we actually decided, and why

Better still, use the more detailed Learn S.M.A.R.T. Experiment Template which includes:

- Hypothesis: the specific, falsifiable hypothesis if there is one

- Fail Condition: the threshold that would have changed the decision

- Time Box: the timing of the experiment

- Early Stop Condition: the fail-fast criteria that tell you if the experiment is broken

- Next Steps: the link to the next experiment run

Tied together, the Question, Insights, and Next Steps make for a natural knowledge graph.
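
As a rough illustration, the template fields could be modeled as a record like the one below. This is a sketch under assumptions: the class name, the field names, the `id` field, and the `follow_chain` helper are all hypothetical, and the two sample records are invented placeholders rather than real results. The Next Steps link is what lets us walk from one experiment to the one that followed it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ExperimentRecord:
    # Core fields from the simple template
    id: str  # hypothetical identifier, used only for linking records
    question: str
    data: str
    plan: str
    results_and_insights: str
    # Optional fields from the more detailed template
    hypothesis: Optional[str] = None
    fail_condition: Optional[str] = None
    time_box: Optional[str] = None
    early_stop_condition: Optional[str] = None
    next_experiment_id: Optional[str] = None  # the "Next Steps" link


def follow_chain(records, start_id):
    """Walk the Next Steps links and return the linked records in order."""
    by_id = {r.id: r for r in records}
    chain, seen = [], set()
    current = by_id.get(start_id)
    # Stop at the end of the chain, at an unknown id, or if links loop back.
    while current is not None and current.id not in seen:
        chain.append(current)
        seen.add(current.id)
        current = by_id.get(current.next_experiment_id)
    return chain


# Invented placeholder records, for illustration only.
records = [
    ExperimentRecord(
        id="EXP-001",
        question="Will customers pay $50 for this?",
        data="Placeholder: which data we planned to collect",
        plan="Placeholder: the experiment type and approach",
        results_and_insights="Placeholder: what we found and decided",
        next_experiment_id="EXP-002",
    ),
    ExperimentRecord(
        id="EXP-002",
        question="Placeholder: the follow-up question",
        data="Placeholder",
        plan="Placeholder",
        results_and_insights="Placeholder",
    ),
]

print([r.id for r in follow_chain(records, "EXP-001")])  # ['EXP-001', 'EXP-002']
```

Keeping the detailed fields optional means a team can start with the four core fields and add the rest later without reformatting old records.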

Don’t have a structured archive yet? Staring at a mess of old presentations? Feed the whole mass of loose presentations into an LLM and tell it to help you structure the data according to that template for indexing.

Prioritize experiments from the last two to three years, focus on anything that resulted in a major pivot-or-persevere decision, and don’t try to retroactively reconstruct everything at once. A partial RAG over 50 well-formatted experiments is more useful than a complete RAG over 500 inconsistently formatted records with biased conclusions.

How to Build It Without an Engineering Team

The technical barrier to building an experiment RAG has dropped to near zero. Off-the-shelf tools now handle the hard parts.

The simplest path:

- Structure the archive. Export or consolidate experiment records into a consistent format using the template fields above. CSV or Markdown both work.

- Upload the archive. Use a tool that supports semantic retrieval. Google NotebookLM, Claude Projects, and custom GPTs all support document-based retrieval without any setup beyond the upload. You can even ask Codex or Claude Code to build you a custom system that integrates directly with Asana, Notion, or Jira if that’s where you’re keeping your data.

- Train your team. Every member of the team needs to get used to writing their insights down and using agents with RAG to double-check their work. If not, the RAG is just another useless artifact that no one ever reads.

- Add hooks. Better than relying on your team, hook your model directly into existing organizational processes. If your team has to submit a PRD into Jira to get it built, have your RAG agent automatically review and comment on every card submitted before engineering works on it.
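
For the first step, a small script can check that every record has the core fields before anything is uploaded. The sketch below is one possible approach, not a required one: the column names and both helper functions are assumptions made for illustration, and the sample row contains placeholder text. It reads CSV text, rejects rows with empty core fields, and renders each record as a Markdown document.

```python
import csv
import io

# Column names mirror the four core template fields; the exact spelling
# is an assumption for this sketch.
REQUIRED = ["question", "data", "plan", "results_and_insights"]


def load_records(csv_text):
    """Parse CSV text into dicts, rejecting rows with empty required fields."""
    records = []
    reader = csv.DictReader(io.StringIO(csv_text))
    for row_number, row in enumerate(reader, start=1):
        missing = [f for f in REQUIRED if not (row.get(f) or "").strip()]
        if missing:
            raise ValueError(f"row {row_number} is missing: {', '.join(missing)}")
        records.append(row)
    return records


def to_markdown(record):
    """Render one record as a Markdown document, one heading per field."""
    sections = [
        f"## {name.replace('_', ' ').title()}\n\n{record[name].strip()}"
        for name in REQUIRED
    ]
    return "\n\n".join(sections) + "\n"


# Placeholder archive with a single invented row.
sample_csv = (
    "question,data,plan,results_and_insights\n"
    "Will customers pay $50 for this?,Placeholder data,"
    "Placeholder plan,Placeholder results\n"
)

for record in load_records(sample_csv):
    print(to_markdown(record))
```

Failing loudly on an incomplete row is a deliberate choice here: it is cheaper to fix a missing field at export time than to discover later that the archive cannot answer the question.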

A working RAG can be set up in a day.

Getting the full benefit may take a bit longer as people adapt. Clever change management will help: insert agents into existing workflows rather than moving everyone’s cheese.

The ongoing cost is maintaining the discipline to add new experiments to the archive in a consistent format… which can of course be AI assisted.

RAG is just the first step in a learning loop in which agents will write their own experiments, analyze results, run a retrospective, and propose next steps.

Compounding Interest

The immediate payoffs are tactical: faster experiment design, fewer repeated mistakes, better onboarding for new team members who can query the archive instead of interviewing people who may have left.

But the compounding effect is strategic.

The organizations that will outperform in the next five years aren’t the ones running the most experiments. They’re the ones whose experiments compound… where the hundredth experiment benefits from the learning of the ninety-nine before it. Human teams could never quite achieve this at scale, but agents can.

Product and innovation teams need to stop thinking about individual features and start thinking about building systems of compounding learning and insight. A RAG experiment database isn’t just a knowledge management system. It’s a competitive advantage.

The question I’d love your answer to:

Have you built any kind of retrievable experiment archive for your product team — even a simple spreadsheet? If not, what’s the biggest barrier?

Comment below and tell me — I read every response.

Frequently Asked Questions

What is a RAG system and how does it help innovation teams?

RAG stands for retrieval-augmented generation. Instead of relying on an AI’s pre-trained knowledge, a RAG system retrieves specific documents or records at query time and uses them to generate answers. For innovation teams, this means an AI assistant can search your actual experiment archive — finding past hypotheses, results, and decisions — rather than generating generic responses. It turns your experiment history into a searchable, queryable institutional memory.

What should an experiment entry include in a knowledge base?

At minimum, capture the question, the data collected, the plan, and the results and insights. Kromatic’s Learn S.I.M.P.L.E. Experiment Template is the simpler of two templates and covers these four core fields — it’s a good starting point for teams new to structured experimentation. For teams ready to go deeper, the Learn S.M.A.R.T. Experiment Template is the more comprehensive version, adding a falsifiable hypothesis, fail condition, time box, early stop condition, and next steps — which makes retrieval significantly more precise.

What tools can non-technical teams use to build a RAG over their experiments?

The simplest options require no engineering setup: Google NotebookLM, Claude Projects, and custom GPTs all support document upload and retrieval. For teams that want more control, Notion AI or a basic vector database (Pinecone, Chroma) offer more flexibility. The bottleneck isn’t the tooling — it’s having a consistent experiment format to upload. Start there, and a working RAG can be running in a day.

How does experiment memory connect to AI agent performance?

By default, AI agents carry no memory between sessions. Every new conversation starts with a blank slate, so without external memory, agents repeat the same research, make the same errors, and answer the same questions across your organization. A RAG over your experiment archive gives agents access to your team’s validated learning, turning a stateless tool into one that benefits from organizational history. This is the same problem Toyota and Amazon solved for human teams, now applied to agents.

How does an experiment knowledge base connect to innovation accounting?

Innovation accounting measures learning velocity as a core innovation metric. An experiment knowledge base makes that learning queryable and compounding — we can track not just how many experiments ran, but which hypotheses are validated, which market assumptions are disproven, and how often past learnings are referenced in new work. It turns a metric into a strategic asset visible to leadership.

If you’re navigating the challenge of making your innovation program’s learning actually compound — across teams, across AI tools, and across budget cycles — executive coaching might be the right conversation. We work with heads of innovation and product leaders at the VP and director level.
