Can Synthetic Personas Replace Customer Discovery? An Honest Look

They'll agree with you faster than any human — and that's the problem.

Quick Answer: Synthetic personas (LLMs prompted to respond like your target customers) can pressure-test a value proposition or kill a weak idea in minutes. But peer-reviewed research shows they systematically compress the diversity you’d expect from real people and can’t surface what you don’t already know. In one large replication study, they reproduced about 76 percent of published experimental effects, and accuracy improves dramatically when they’re grounded in real interview data rather than fictional backstories. Use synthetic interviews to get sharper before real interviews, not instead of them.

The Promise (and the Temptation)

Synthetic personas are a dream come true for some: take an LLM, prompt it to respond like your actual customers, and use it to validate your brilliant business idea.

You can use them as interview subjects, throw them at a landing page, or even run usability tests with them. Synthetic personas are cheaper and faster to recruit than real people. Plus…

…you don’t need to talk to (potentially scary) real people.

The temptation to talk to a computer over a human is huge, especially for introverts like me who would rather stay home and watch Star Trek reruns than cold-call someone. But the temptation is dangerous.

I’ve personally tried them and found them to be both useful and horrifying. They’ve even given me feedback on this post! (Apparently I make too many asides, but what do they know.)

Despite strong sales pitches from companies offering synthetic personas as a service (PaaS?), there are legitimate concerns about how valid they are as tools for idea validation. So here’s the data (and some rules of thumb for how we can use synthetic personas effectively in our research today).

What the Research Actually Says

The pros and cons for synthetic personas are (predictably) more nuanced than either the hype cycle or the UX skeptics would suggest. Here’s what peer-reviewed and independent research actually says so far.

Where they work. A 2024 study by Yeykelis, Cummings et al. replicated 133 published experimental findings from the Journal of Marketing using LLM-powered personas. The result: 76 percent of main effects reproduced successfully… better than psychology’s historical replication baselines of 36–47 percent, though marketing experiments may have different benchmarks. Separately, Nielsen Norman Group reviewed three studies comparing “digital twins” (LLM simulations grounded in actual interview transcripts) to synthetic users built from demographics alone. When fed real interview data, digital twins could backfill missing responses with a near-perfect correlation (r = 0.98 in the underlying Kim and Lee study). For new questions the correlation was lower, but still meaningfully better than demographic-only approaches. Even heavily trimmed transcripts retained accuracy scores of 0.79–0.83. The richer the real data, the better the simulation.

Where they fail. Across multiple studies, LLM-simulated respondents are systematically more positive, more progressive, and less varied than real humans. A NeurIPS 2025 study by Li, Chen, Namkoong, and Peng (out of Columbia Business School) tested three types of LLM-generated personas on the 2024 U.S. presidential election. Minimal, census-style personas performed reasonably well… but richly detailed, LLM-generated personas predicted Democratic victories across all states, wildly diverging from reality. The instinct to make a persona “more realistic” by adding backstory and personality actually degrades accuracy.

The fundamental limit is that LLMs simulate from their training data. They can tell us what our customer segment might say. They cannot surface the surprising, contradictory, or context-specific insight that a real person shares when we ask an open-ended question in a live conversation grounded in their real-world context. Synthetic personas are good at known unknowns (pressure-testing the assumptions we already have). They are largely incapable of revealing unknown unknowns (the insights we didn’t know to look for).

What we don’t know yet. Most of the published research focuses on consumer (B2C) contexts, where LLM training data covers common demographics reasonably well. For B2B contexts (where many innovation teams actually work), the picture is murkier.

Niche roles, specialized pain points, and proprietary industry context are not well represented on the public intertubes. Moreover, complex sales like B2B or B2G involve the interaction of several different personas in a dynamic environment where individual personalities have to deal with politics and procedure.

NN/g’s research explicitly cautions against using synthetic users for niche or specialized populations, noting that for specialized segments the underlying data is “patchy and inaccurate.” Early B2B-specific testing suggests a stronger positive bias and a “herd mentality” in synthetic responses compared to real B2B buyers, who are… well… “idiosyncratic” would be the kind way of putting it.

Similarly, we’d expect narrow customer segments to perform worse than broad ones, simply because LLMs have less data to draw on for highly specific roles or industries. But there’s limited published research directly comparing narrow versus broad segment accuracy in a controlled way.

This is an important gap, especially for innovation teams or startups targeting niche enterprise verticals for early adopters. If our customer segment is “senior procurement leaders at mid-size pharmaceutical companies,” we should treat synthetic persona output with more skepticism than if we’re targeting a broad consumer audience.

As with all innovations, we need to ground ourselves in real data as early as possible.

How to Use Synthetic Personas Effectively Right Now

The research gives us some guidelines… Synthetic personas are NOT a replacement for customer discovery. But they can speed us up at specific points in the validation process.

Stage 1 — Quick idea (in)validation. Start with a minimal persona: job title, industry, one or two key pain points. Use it to pressure-test a concept, generate likely objections, and (critically) figure out the right questions to ask real people. The goal here isn’t to validate the idea. It’s to kill obviously weak ideas and sharpen our interview guide before we spend anyone’s time. Census-style attributes outperform rich fictional backstories here, so keep the persona description lean.
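To make “keep the persona description lean” concrete, here is a minimal sketch of a Stage 1 prompt builder. The class `MinimalPersona`, the function `build_minimal_persona_prompt`, and the prompt wording are all hypothetical illustrations, not any vendor’s API. The persona carries only census-style attributes (job title, industry, one or two pain points), and the prompt asks for objections and open questions rather than approval.

```python
from dataclasses import dataclass, field


@dataclass
class MinimalPersona:
    """Census-style attributes only: no backstory, no personality quirks."""
    job_title: str
    industry: str
    pain_points: list[str] = field(default_factory=list)


def build_minimal_persona_prompt(persona: MinimalPersona, concept: str) -> str:
    """Hypothetical Stage 1 prompt: ask for objections, not validation."""
    # Enforce the "one or two key pain points" rule so the persona stays lean.
    if not 1 <= len(persona.pain_points) <= 2:
        raise ValueError("keep the persona lean: one or two pain points")
    pains = "; ".join(persona.pain_points)
    return (
        f"You are a {persona.job_title} in the {persona.industry} industry. "
        f"Your main pain points: {pains}.\n"
        f"A startup pitches you this concept: {concept}\n"
        "List the three strongest reasons you would NOT buy it, "
        "then list the questions you would want answered first."
    )


persona = MinimalPersona(
    job_title="procurement manager",
    industry="pharmaceutical",
    pain_points=["slow vendor onboarding", "audit paperwork"],
)
print(build_minimal_persona_prompt(persona, "a tool that pre-fills vendor audit forms"))
```

The questions the persona says it “would want answered first” are raw material for the real interview guide in Stage 2.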

Stage 2 — Real customer interviews. There is no substitute for getting out of the building. Talk to real people! Be human before the robots start doing it for us. Synthetic personas can help us arrive with better hypotheses, sharper questions, and a clearer sense of what we’re trying to learn, but the real conversation is where discovery happens.

Stage 3 — Build a grounded persona. After real interviews, feed the actual transcripts (or even solid summaries) back into the LLM. Now we have a synthetic persona anchored in real data, not guesswork. That’s gold.
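A minimal sketch of what “feed the actual transcripts back into the LLM” can look like, assuming the transcripts are already plain text. The function `build_grounded_persona_prompt` and its `max_chars_per_transcript` parameter are hypothetical; the character-based trimming is deliberately naive and stands in for whatever summarizing or trimming a team actually uses.

```python
def build_grounded_persona_prompt(
    transcripts: list[str], max_chars_per_transcript: int = 4000
) -> str:
    """Hypothetical Stage 3 prompt built from real interview transcripts."""
    if not transcripts:
        raise ValueError("a grounded persona needs at least one real transcript")
    excerpts = []
    for i, text in enumerate(transcripts, start=1):
        # Naive trimming: keep the first N characters of each transcript.
        # A real pipeline might trim by speaker turn or use summaries instead.
        excerpt = text.strip()[:max_chars_per_transcript]
        excerpts.append(f"--- Interview {i} ---\n{excerpt}")
    return (
        "The transcripts below are from real customers in one segment.\n"
        "Answer as a composite of these people. Stay consistent with what "
        "they actually said, and if the transcripts don't cover a question, "
        "say so instead of guessing.\n\n" + "\n\n".join(excerpts)
    )


prompt = build_grounded_persona_prompt(
    [
        "Q: How do you onboard vendors today?\nA: Spreadsheets, mostly. It takes weeks.",
        "Q: What slows audits down?\nA: Chasing signatures.",
    ],
    max_chars_per_transcript=2000,
)
print(prompt)
```

Telling the model to admit when the transcripts don’t cover a question is one way to keep it from quietly falling back on its training data, which is exactly the guesswork the grounded persona is meant to replace.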

Stage 4 — Synthetic stress-testing at scale. With a grounded persona, we can pressure-test variations of our value proposition, generate edge-case objections, and simulate responses to positioning changes… all without needing to recruit again. We can generate and narrow down a hundred different hypotheses and rapidly get to the few worth testing against real people.

Use this stage to extend real research, not replace it.

Tactical tips for better results:

- Increase the temperature setting to widen response variance (more variance doesn’t mean more accuracy, but a synthetic persona that agrees with everything is useless).

- Run multiple simulations of the same question and look for disagreement between runs; that’s where the interesting signal lives.

- Avoid rich backstories and personality quirks in the persona prompt… the research so far suggests that less fictional detail produces more accurate responses.

- And always use synthetic personas to generate objections and stress-test, never to seek validation.

If we’re using AI to confirm what we already believe, we’re just putting extra effort into normal human confirmation bias.
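The “run it several times and look for disagreement” tip can be roughed out in a few lines. This is a minimal sketch under loud assumptions: the responses are collected however you already call your model (ideally at a raised temperature), and disagreement is measured as average word-overlap (Jaccard) distance between runs. That’s a crude proxy we chose for illustration; it ignores meaning and phrasing, and the function name `mean_pairwise_disagreement` is hypothetical.

```python
import itertools
import re


def _word_set(text: str) -> set[str]:
    """Lowercased words, keeping apostrophes (e.g. "i'd")."""
    return set(re.findall(r"[a-z']+", text.lower()))


def mean_pairwise_disagreement(responses: list[str]) -> float:
    """Average Jaccard distance between every pair of responses.

    0.0 means every run used exactly the same words; values near 1.0 mean
    the runs barely overlap at all.
    """
    if len(responses) < 2:
        raise ValueError("need at least two runs to measure disagreement")
    distances = []
    for a, b in itertools.combinations(responses, 2):
        wa, wb = _word_set(a), _word_set(b)
        union = wa | wb
        # Two empty responses are treated as identical.
        distances.append(1 - len(wa & wb) / len(union) if union else 0.0)
    return sum(distances) / len(distances)


# Three runs of the same question (illustrative text, not real output).
runs = [
    "Too expensive for a team our size.",
    "Too expensive for a team our size.",
    "Pricing is fine, but I'd worry about data security.",
]
score = mean_pairwise_disagreement(runs)
print(f"{score:.2f}")  # → 0.67
```

A score near zero across many runs is the “agrees with everything” warning sign. When the score is high, the useful step is reading the odd run out (here, the data-security worry) rather than averaging it away.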

My Personal Experience

I personally use synthetic personas based on real research and real experience. Most of the time, they tell me what I already know.

It’s the times when they tell me something counterintuitive that are worth digging into.

Sometimes they’re way off base, but they make me think twice and question my assumptions.

I use them regularly to critique my blog posts, help me select articles for the newsletter, and smoke-test a value proposition.

The ROI for me is very clear:

- I can get feedback that I otherwise wouldn’t have the time or money to gather.

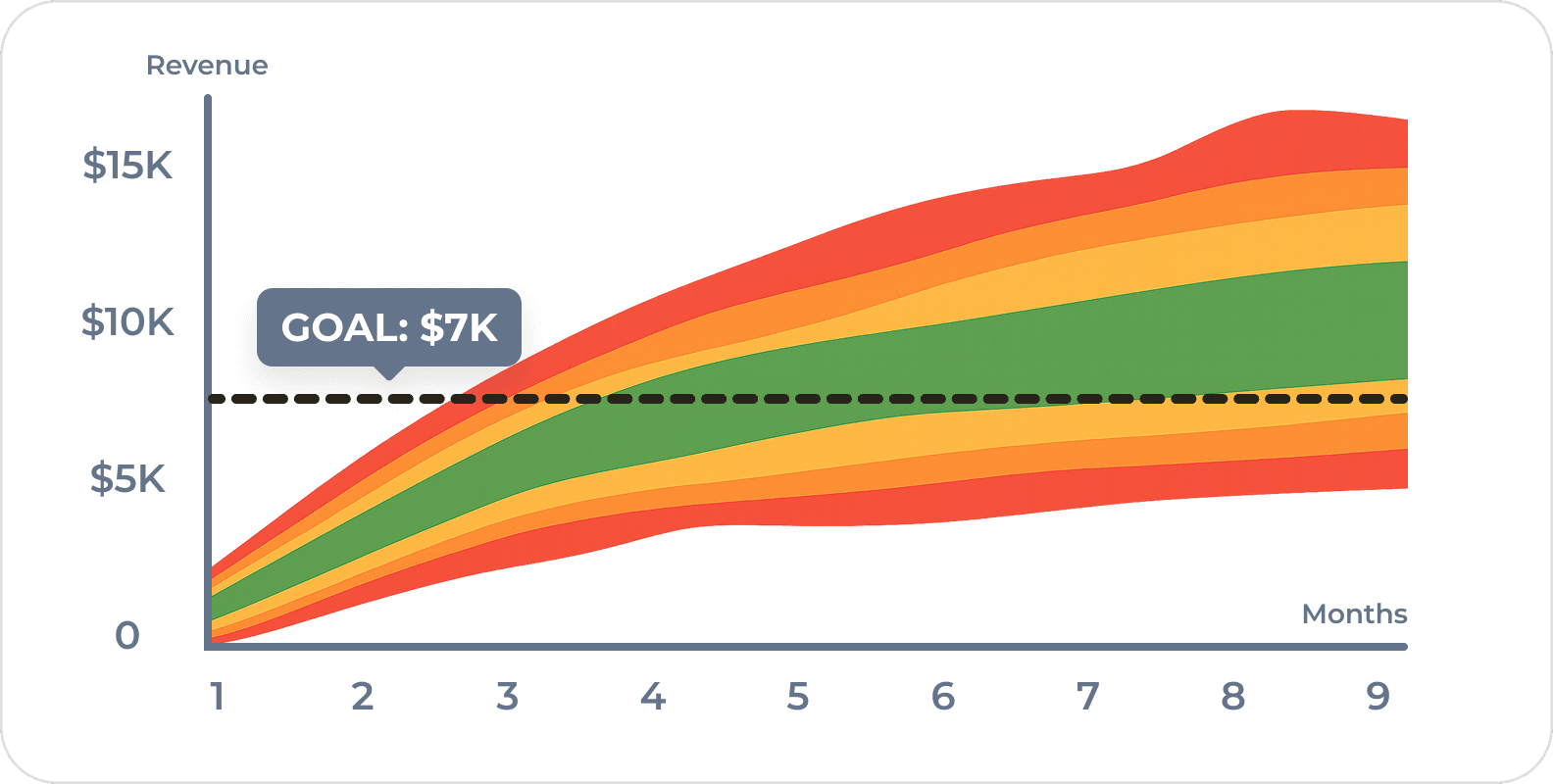

- I can compress a six-week interview cycle into two weeks.

Instead of spending my first several open-ended interviews just figuring out what questions to ask, I can do 80% of that work with synthetic personas. I still leave room in real interviews for tangents and serendipity, but I lock down the interview guide far earlier than I otherwise would.

I can also test my recruiting script before sending the first email. That usually saves me at least two rounds of email revisions, and saves my address book from overuse.

For a small innovation team, the ROI is obvious. For larger teams, it’s even more so: NOT having to get legal clearance or permission from the sales team before your first (synthetic) interviews can be a massive accelerant.

They’ll Get Better (and Soon)

The limitations are real… but the field is moving fast.

Big data makes a big difference. Large organizations have large data sets of proprietary customer research. Fine-tuning models on that data could significantly reduce the positivity bias and variance compression we see today.

And LLMs are improving… fast.

While I am personally skeptical that LLMs can get us all the way to artificial general intelligence (AGI), they’re very impressive and improving every day. In 12 months, or even six, synthetic interviews might start replacing real ones.

But… today’s limits are real, and making decisions based on where we hope the technology will be (rather than where it is) is exactly the kind of untested assumption that can kill a business before it gets started.

So, have you tried synthetic personas in your own research? Did they surface anything you didn’t already suspect? And for those working in B2B or niche markets… did the results feel right, or did the AI version of your customer feel suspiciously agreeable? We’d love to hear what you’re seeing.

Frequently Asked Questions

Can synthetic personas replace customer interviews?

No. They can replicate effects we already know about, but they can’t tell us what we don’t know. LLMs simulate from training data, so the output reflects existing patterns. The surprise insight that changes our entire approach? That comes from a real person saying something we never would have thought to ask about. Use synthetic personas to prepare for interviews and extend findings afterward, not as a substitute for talking to humans.

What data should I feed a synthetic persona?

Start with the basics: job title, industry, one or two pain points. That’s enough for Stage 1 (killing bad ideas and sharpening questions). But if we want real accuracy, we need to ground the persona in actual interview transcripts or detailed summaries. Even heavily trimmed transcripts retained accuracy scores of 0.79–0.83 in the research NN/g reviewed. The more real customer data we put in, the less the LLM has to guess.

How do I know if the results are any good?

Run the same question multiple times. If every run gives nearly identical answers, something is wrong (temperature too low, persona too constrained, or both). We want disagreement between runs… that’s where the interesting signal lives. And if synthetic responses diverge substantially from what real customers have actually told us, trust the humans. Always.
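One way to put “trust the humans” into practice, assuming we’ve already asked real customers some rating-scale questions: hold those answers back, ask the grounded persona the same questions, then check the overall correlation and flag the specific questions where the two disagree. This is a minimal sketch in the spirit of the backfill comparisons described earlier, not the method those studies used. The function names, the 1–5 scale, the `max_gap` threshold, and the numbers below are all hypothetical and purely illustrative.

```python
import math


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation between two equal-length lists of ratings."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least two values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / math.sqrt(var_x * var_y)


def divergent_questions(
    real: dict[str, int], synthetic: dict[str, int], max_gap: int = 1
) -> list[str]:
    """Questions where the synthetic rating misses the real one by more than max_gap."""
    return [q for q in real if q in synthetic and abs(real[q] - synthetic[q]) > max_gap]


# Held-out 1-5 ratings (illustrative numbers, not from any study).
real = {"q1": 4, "q2": 2, "q3": 5, "q4": 1}
synthetic = {"q1": 4, "q2": 3, "q3": 5, "q4": 4}

shared = sorted(real.keys() & synthetic.keys())
r = pearson_r([real[q] for q in shared], [synthetic[q] for q in shared])
print(f"r = {r:.2f}")                          # → r = 0.67
print(divergent_questions(real, synthetic))    # → ['q4']
```

With only a handful of questions, a single correlation is noisy, which is why the per-question check matters. In this made-up example the persona is far more positive than the real customer on `q4`, the kind of positivity bias the research describes, and that is the answer to throw out in favor of the human one.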

Do synthetic personas work for B2B?

With a lot more caution. Most of the published research tested synthetic personas in B2C contexts, where LLM training data is richer. B2B is harder: niche roles, proprietary context, and the messy politics of multi-stakeholder buying decisions are not well represented in training corpora. NN/g explicitly warns against synthetic users for specialized populations. Our advice: if we’re doing B2B research, get to real interview data faster and don’t trust the synthetic output until we’ve grounded it.
