Quantitative Experiment Design: A Cheat Sheet for Product Teams

Because objective-sounding numbers can still point you off a cliff.

Quick Answer: When designing quantitative experiments, we should always try to invalidate our hypothesis rather than confirm it, choose actionable metrics (with a “%” or “per” in them), agree on confidence levels before running the test, and pick the minimum sample size needed to potentially change our minds. As product managers, we need to treat each experiment like a minimum viable test, gathering just enough data to tell us if we’re wrong, while watching for common errors like non-random samples, confirmation bias, and confusing correlation with causation.

A lot of innovation work involves small sample sizes and relies on qualitative data. Design thinking, agile, customer discovery, and lean startup all emphasize the need for starting small and building up. But eventually, we have to start running quantitative experiments, which requires a focus on quantitative experiment design. This means we’d better design experiments that point us in the right direction. If we run flawed quantitative experiments, all those objective-sounding numbers might convince us to take the wrong path, away from our customers. Having watched or run countless quantitative experiments over the years, we’ve seen a lot of errors come up time and time again. So we created a basic cheat sheet to supplement our online interactive workshop on statistics.

This is just a list of tips to help you remember when to apply those lessons. While this post won’t teach you stats, we hope it will serve as a reminder of some key lessons and help you avoid some critical mistakes. Because we like to open up our work by using Creative Commons licensing when we can, we’re sharing our Rules of Thumb here along with some brief descriptions of each rule. Feel free to share it with your colleagues, or even print it out and post it for quick reference!

1. Create a Great Test Plan

Focus on the Goal

Always try to invalidate your hypothesis. We don’t need a perfect experiment with 99.99967% confidence. We need just enough data to tell us if we’re doing something stupid. We should think of each quantitative experiment like a minimum viable test and make sure we’re only gathering data that would tell us if we’re wrong.

Choose the Metric

When we choose a metric, we should try thinking through the possible results. If every possible outcome makes us feel good, it’s probably a vanity metric. Try a new one. Similarly, if each possible result could be interpreted in such a way as to either validate or invalidate a prediction, be careful! It’s also probably a vanity metric. Actionable metrics should generally have a “%” or a “per” in them. They should be something divided by something else, for example “signups per visitor” or “average basket size per customer.” If you’re not sure whether your metric is actionable, get a second set of eyes and talk it through.

Set the Confidence Level

Agree on the confidence level before the test. Not every decision needs to be made with 95% confidence. We’re entrepreneurs! If we had perfect information, everyone would be doing it. But if you’re not sure, stick with 95% as the default.

Estimate the Population

When calculating the required sample size, don’t worry about getting the population size exactly right if it’s pretty large, e.g., 100,000 people. When the population is very large, the exact number has almost no effect on the required sample size or margin of error.

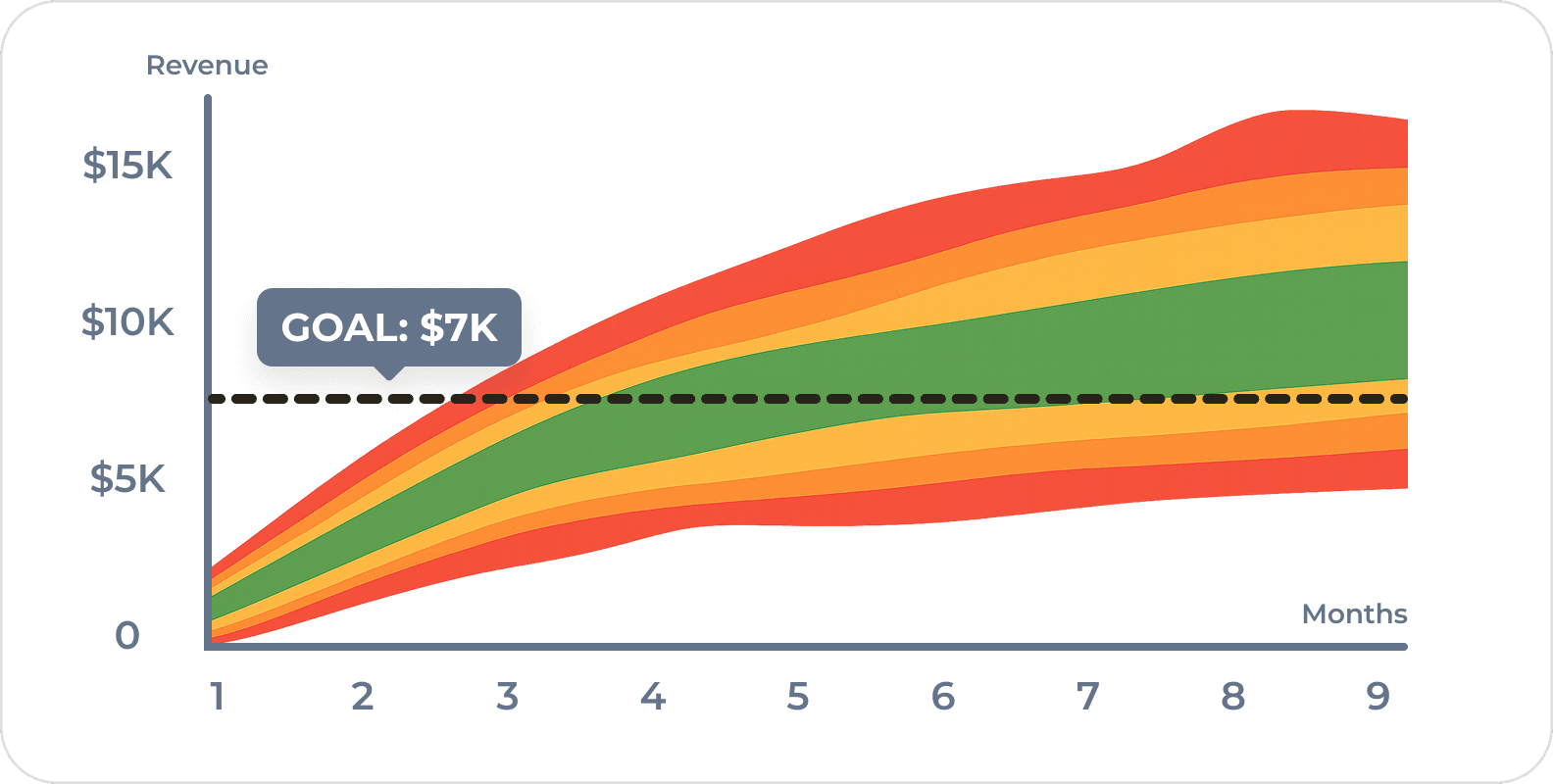
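To see why the exact population size matters so little, here is a minimal sketch. It assumes the standard sample-size formula for a proportion with a finite population correction, a 5% margin of error, and the worst-case rate of 50%. The function name `required_sample_size` and the population values are illustrative, not taken from our calculator.

```python
import math
from statistics import NormalDist


def required_sample_size(population, margin_of_error, confidence=0.95, p=0.5):
    """Sample size for estimating a proportion in a finite population."""
    # Two-sided z-score for the chosen confidence level (about 1.96 at 95%).
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # Sample size if the population were effectively infinite.
    n0 = (z ** 2) * p * (1 - p) / margin_of_error ** 2
    # Finite population correction: only matters when the population is small.
    n = n0 / (1 + (n0 - 1) / population)
    return math.ceil(n)


# Hypothetical population sizes, from small to very large.
for population in (1_000, 10_000, 100_000, 1_000_000, 10_000_000):
    size = required_sample_size(population, margin_of_error=0.05)
    print(f"population={population:>10,}  sample size={size}")
```

Under these assumptions, the required sample size climbs noticeably between small populations and then flattens out, so a rough guess at a large population is usually good enough.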

Decide on Sample Size

The more data we get, the more certainty we have. But gathering data has increasing costs and diminishing returns. So pick the minimum sample size required for a margin of error that would potentially change your mind. (See Focus on the Goal.) When generating ideas during customer discovery or usability testing, sample size should not be thought of in the same way. A sample of 5 is good enough.
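The diminishing returns are easy to see in numbers. The sketch below assumes the normal-approximation margin of error for a proportion at the worst-case rate of 50% and a very large population. The helper name `worst_case_margin` and the sample sizes are illustrative.

```python
import math
from statistics import NormalDist


def worst_case_margin(n, confidence=0.95):
    """Margin of error for a proportion at p = 0.5, the widest case."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return z * math.sqrt(0.25 / n)


previous = None
for n in (50, 100, 200, 400, 800, 1600):
    margin = worst_case_margin(n)
    # Show how much each doubling of the sample actually buys us.
    gain = "" if previous is None else f"(improved by {previous - margin:.3f})"
    print(f"n={n:5d}  margin=+/-{margin:.3f} {gain}")
    previous = margin
```

Because the margin shrinks with the square root of the sample size, halving it takes roughly four times the data. That is the cost we weigh against a margin that would actually change our minds.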

Choose the Sample

Make absolutely sure our sample is randomly chosen. If we’re testing on a Tuesday, is Tuesday the same as Saturday? If the sample isn’t random, we could wind up with false positives or false negatives. Check for seasonality, related experiments that might skew our data, or other weirdness.
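One way to avoid hand-picking a convenient group is to let a random number generator choose. This is only a sketch: the `all_users` list, the `draw_random_sample` helper, and the seed are hypothetical, and it assumes we already have a list of everyone eligible across the whole period we care about (not just Tuesday’s visitors).

```python
import random


def draw_random_sample(user_ids, sample_size, seed=None):
    """Pick sample_size distinct users uniformly at random."""
    rng = random.Random(seed)  # a seed makes the draw reproducible for audits
    return rng.sample(list(user_ids), sample_size)


# Hypothetical pool of eligible users covering every day of the week.
all_users = [f"user-{i}" for i in range(10_000)]
sample = draw_random_sample(all_users, 400, seed=42)
print(len(sample), sample[:3])
```

A random draw does not fix a biased pool. If the list itself only contains weekday users, the sample will too.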

2. Debrief and Calculate Results

Calculate Margin of Error

Remember to check the confidence level. Don’t calculate margin of error with a different level than you agreed to when designing the experiment. Calculate the margin of error after the experiment, not before. For a rate or percentage, it depends on the observed result, so it changes with the actual outcome. (Try it using our experiment calculator. If you’re using a margin of error calculator that isn’t adjusting based on your result, it’s dead wrong.) And if running an A/B test, make sure you see the margin of error for both variants. Special tip for investors and business managers: if a team is showing quantitative experiment results without a sample size or margin of error, you cannot judge the data. Insist that the team show the real data.
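As a rough illustration of why the margin of error depends on the result, here is a sketch using the simple normal-approximation formula for a proportion. The signup counts are made up, the `margin_of_error` helper is hypothetical, and our experiment calculator may use a different method.

```python
import math
from statistics import NormalDist


def margin_of_error(successes, n, confidence=0.95):
    """Observed rate and its normal-approximation margin of error."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = successes / n
    return p, z * math.sqrt(p * (1 - p) / n)


# Hypothetical A/B results: (signups, visitors) per variant.
results = {"A": (30, 500), "B": (45, 500)}
for name, (signups, visitors) in results.items():
    rate, moe = margin_of_error(signups, visitors)
    print(f"{name}: {rate:.1%} +/- {moe:.1%} "
          f"(range {rate - moe:.1%} to {rate + moe:.1%})")
```

With the same number of visitors, the two variants get different margins because their observed rates differ. Reporting both ranges side by side shows whether they overlap.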

Remember the Rule of Five

If trying to get a quick estimate, remember the Rule of Five: there is a 93.75% chance that the median of a population lies between the smallest and largest values in a random sample of 5.
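The 93.75% figure comes from a short calculation: each randomly sampled value has a 50% chance of falling above the median, so all five land on the same side with probability 2 × 0.5^5 = 6.25%. The sketch below checks this with a simulation. The skewed population and the function name `simulate_rule_of_five` are made up for illustration.

```python
import random

# Chance that five random values are NOT all on the same side of the median.
analytic = 1 - 2 * 0.5 ** 5


def simulate_rule_of_five(trials=20_000, seed=0):
    """Fraction of 5-item samples whose min-max range contains the median."""
    rng = random.Random(seed)
    # Hypothetical, deliberately skewed population (e.g. order values).
    population = [rng.lognormvariate(0, 1) for _ in range(10_001)]
    median = sorted(population)[len(population) // 2]
    hits = 0
    for _ in range(trials):
        sample = rng.sample(population, 5)
        if min(sample) <= median <= max(sample):
            hits += 1
    return hits / trials


print(f"analytic:  {analytic:.4f}")
print(f"simulated: {simulate_rule_of_five():.4f}")
```

The rule only tells us the median is somewhere inside the sample’s range. With five data points that range can be wide, which is why it is a quick estimate and not a substitute for a proper test.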

3. Synthesize Insights

Focus on the Goal

I cannot repeat this enough. You cannot conclusively prove anything, only fail to disprove it. Always try to invalidate your hypothesis.

Check for Common Errors

First let’s review the key points from above.

- Remember to check the confidence level.

- Check that the sample is truly random.

- Consider any factors that might produce a false positive or false negative.

- Look for alternative explanations or changes.

But here are a few more:

- Check for potential bias from the people running the experiment, including yourself. Be wary of teams and colleagues with a confirmation bias. They’ll report what they want to see.

- Check if observation changed the result. Sometimes our test participants can see us looking and will change their behavior.

- The odds don’t change. A certain result is not “due.” Don’t fall into the Gambler’s Fallacy. If you flip heads five times in a row, the next flip isn’t any more likely to be tails.

- Don’t be surprised by unusually high or low numbers. Statistics is weird. Sometimes you flip heads twenty times in a row. It doesn’t mean the coin is rigged. Try again and slowly increase the sample size.

- Don’t assume a large group will behave the same as the smaller one. If you test with early adopters, don’t be surprised when the majority of people behave differently. The populations are different.

- Don’t look at tests in isolation — consider all known facts together. Don’t ignore your previous knowledge just because an A/B test tells you something. Sometimes your previous knowledge plays a key role.

- Correlation does not equal causation. This is an oldie but a goodie. Ice cream sales and shark attacks go up in the summer, but shark attacks don’t make people want ice cream and ice cream doesn’t cause shark attacks. Don’t jump to conclusions.
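The Gambler’s Fallacy point in the list above is easy to check with a quick simulation. This is a sketch assuming a fair coin. The function name `next_flip_after_streak`, the trial count, and the seed are arbitrary choices.

```python
import random


def next_flip_after_streak(streak=5, trials=200_000, seed=1):
    """Share of heads on the flip that follows a run of heads."""
    rng = random.Random(seed)
    streaks_seen = 0
    heads_after_streak = 0
    for _ in range(trials):
        # True means heads; flip streak + 1 fair coins.
        flips = [rng.random() < 0.5 for _ in range(streak + 1)]
        if all(flips[:streak]):  # keep only runs that start with a streak
            streaks_seen += 1
            heads_after_streak += flips[streak]
    return heads_after_streak / streaks_seen, streaks_seen


share, count = next_flip_after_streak()
print(f"{count} streaks of five heads; next flip was heads {share:.1%} of the time")
```

The share of heads after a streak stays close to 50%, so tails is never “due.”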

Lastly, think about Next Steps. Don’t go all in with every new move. When deciding on next steps, always increase the sample size slowly. There are many, many things that can go wrong when running quantitative tests, so go slowly and make sure that your “success” isn’t just a statistical fluke.

Lessons Learned

- There are no certainties in statistics.

- Statistics is hard. Get help.

- When in doubt, increase the sample size slowly…

Frequently Asked Questions

How do I design a good quantitative experiment for lean startup?

Start by focusing on the goal: always try to invalidate your hypothesis rather than confirm it. Choose an actionable metric (something with a “%” or “per” in it), agree on a confidence level before running the test, and decide on the minimum sample size needed to potentially change your mind. As product managers, we should treat each quantitative experiment like a minimum viable test.

What sample size do I need for a quantitative experiment?

Pick the minimum sample size required to achieve a margin of error that would potentially change your mind. More data gives more certainty, but with increasing costs and diminishing returns. For very large populations (e.g., 100,000+), the exact population size has almost no effect on the required sample size. For qualitative work like usability testing, a sample of 5 is good enough.

How do I know if I’m using a vanity metric?

Think through every possible result of your experiment. If every outcome makes you feel good, it’s probably a vanity metric. Similarly, if any result could be interpreted as either validating or invalidating your prediction, that’s a warning sign. Actionable metrics should be something divided by something else — like “signups per visitor” or “average basket size per customer.” Get a second set of eyes if you’re unsure.

What are the most common mistakes in quantitative experiment design?

Common errors include not randomizing your sample (e.g., testing only on Tuesdays), calculating margin of error at a different confidence level than originally agreed upon, ignoring confirmation bias in the team reporting results, assuming early adopter behavior will match the mainstream population, and confusing correlation with causation. As product managers, we also need to avoid going all-in based on a single test — always increase sample size slowly.

Should I always use 95% confidence in experiments?

Not necessarily. We’re entrepreneurs — not every decision needs 95% confidence. The key is to agree on the confidence level before the test runs, not after. If you’re unsure what level to use, 95% is a solid default. But sometimes a lower confidence level is perfectly appropriate when we just need enough data to tell us if we’re doing something fundamentally wrong.
