Guesstimation: 5 Techniques to Estimate When You Have No Data

Because "I believe in it" isn't a financial model.

Quick Answer: Guesstimation is the skill of turning limited information into useful quantitative estimates — critical in innovation where hard data rarely exists. As product managers, we can dramatically improve accuracy by combining five techniques: leveraging the wisdom of the crowd, estimating in ranges instead of single values, using Fermi Decomposition to break complex problems into smaller pieces, accounting for known biases like compounding effects, and calibrating our confidence with equivalence bets. Like any skill, guesstimation improves with deliberate practice.

By Tristan Kromer

In innovation, everything is a guess. Innovation can almost be defined by a lack of information. We often have no idea how many customers will walk through our door, what our price point will be, how much waste the manufacturing process will create, what the market size is, or the eventual outcome of a project. So we use “guesstimation” to turn the small bits of information we DO have into a plan.

As entrepreneurs, we get comfortable making big, bold decisions from hunches based on subjective information. We might invest months or years of our time just because we think there might be a market. We might invest millions in a project just because we believe in it. That’s inspiring, but it’s not a recipe for a successful business model. To make good decisions, we need solid, quantitative data. We shouldn’t invest a definite number of dollars for a completely unknown return. At the very least, we need to weigh the odds of ever getting our money back.

How We Got Here

Alexander Osterwalder rightly pointed out that 60-page business plans full of Excel models were often a waste of time because everything in them was pure guesswork. The countless hours spent drafting compelling executive summaries and generating models would be better spent going out and testing ideas. So Osterwalder created the Business Model Canvas, a lightweight business model sketchbook used to identify hypotheses and then rapidly modify them based on data.

This was an overwhelmingly positive development, helping the startup and venture capital community move beyond the cumbersome conjecture of the business plan. Methodologies like lean startup, design thinking, and business model generation helped spur faster iteration and the qualitative data needed to make rapid decisions. But we still need numbers. We want to know how much impact we’re going to have. We want to know our margins. We want to know how much CO2 our manufacturing process is going to put into the air. Customer discovery interviews alone are not going to answer those questions. If anything, the innovation community has swung too far in the direction of qualitative insight. We need to strike a balance between qualitative and quantitative data, and to make them work together. When we need numbers, qualitative insights can enhance and inform quantitative models. This is the art – and the science – of guesstimation.

Improving Your Guesstimation

As Osterwalder pointed out, any numbers we feed into a quantitative model for innovation are guesses. But there are good guesses and bad guesses, and some people are a lot better at guessing than others. Try these methods to improve the accuracy of your guesstimations, even in extremely uncertain situations.

Tap the wisdom of the crowd

Individuals are terrible at estimating things, but groups of individuals are pretty good at it. If one person tries to guess the number of pennies in a jar, they will likely miss the mark by a greater margin than the average of a group’s guesses. The “wisdom of the crowd” is built on the canceling of biases across a large number of people. Different estimation methods and different amounts of experience and knowledge combine to create an average that is better than most individual guesses. This won’t eliminate any systemic biases, but it’s better than one person’s guess.

How you do it

- Assemble a group of people.

- Have them each create an estimate independently.

- Average the estimates.
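The three steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming the guesses have already been collected independently. The numbers are made up, and `combine_estimates` is a hypothetical helper name. The median is included only as an optional cross-check against a single extreme guess; the method described here is the plain average.

```python
from statistics import mean, median


def combine_estimates(estimates):
    """Combine independent guesses into a single crowd estimate.

    Returns the mean (the plain average) and the median, which is
    less sensitive to one extreme guess.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    return {"mean": mean(estimates), "median": median(estimates)}


# Hypothetical, made-up guesses for the number of pennies in a jar.
guesses = [400, 650, 720, 800, 1500]
crowd = combine_estimates(guesses)
print(crowd)
```

If the mean and median are far apart, one or two guesses are pulling the average, which may be worth a conversation before using the number.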

Guesstimation tips

- Make sure it’s a diverse group. A group of people with similar backgrounds is likely to hold the same biases.

- Make sure your estimators aren’t suffering from undue influence (e.g., the boss has already expressed a strong opinion). The value of this method lies in honest disagreement.

Estimate in ranges

Typical estimates are single values:

- 10 tons of CO2 per month

- $27 average basket size

- 15% conversion rate

But single-value estimates fail to express the estimator’s level of uncertainty. If an innovator named Alice estimates that their new invention will remove 10 tons of CO2 from the air per month and another innovator named Bob estimates that their invention will remove 20 tons, should I invest in Bob or Alice? If Alice has a decade of experience in carbon capture and has previously won the Nobel Prize, while Bob just graduated high school, shouldn’t I factor that in? The raw numbers of 10 and 20 don’t express this difference in Alice and Bob’s knowledge and confidence.

A better approach is to estimate with ranges. In this approach, we allow everyone to express a range of possible outcomes. Alice might estimate 8 - 12 tons of CO2 per month while Bob might estimate 1 - 50 tons of CO2 per month. Bob’s much wider range of 1 to 50 expresses that he is wildly optimistic, but he is aware that his invention might do very little. Alice’s narrower range of 8 to 12 reflects Alice’s greater degree of confidence.

Expressing estimates as a range instead of a single value allows the estimators to express their level of confidence and can help decision-makers understand how much information is available. Expressing the level of uncertainty in a guesstimate also allows us to show progress as we run experiments and generate more information. We might start with an estimated conversion rate of 10%-50%, then run a few tests, and then narrow this range to 25%-35%. The midpoint of both ranges is the same, but we are much more confident now.

(Note: Douglas Hubbard sometimes calls this a confidence interval, but some statisticians complain about that term as it means something else in statistics. We call it an estimation interval or ranged estimate.)

How you do it

- Estimate the value.

- Estimate the maximum possible value.

- Estimate the minimum possible value.

- Experiment.

- Tighten your range.
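One way to make the “tighten your range” step visible is to track the midpoint and width of each ranged estimate. The sketch below reuses the 10%-50% and 25%-35% conversion rate example. `RangedEstimate` is a hypothetical name introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RangedEstimate:
    """A ranged estimate expressed as a low and a high bound."""

    low: float
    high: float

    def __post_init__(self):
        if self.low > self.high:
            raise ValueError("low must not exceed high")

    @property
    def midpoint(self):
        return (self.low + self.high) / 2

    @property
    def width(self):
        return self.high - self.low


# Conversion rate before and after running a few experiments.
before = RangedEstimate(0.10, 0.50)
after = RangedEstimate(0.25, 0.35)

print(f"midpoint: {before.midpoint:.2f} -> {after.midpoint:.2f}")
print(f"width:    {before.width:.2f} -> {after.width:.2f}")
```

The midpoint does not move, but the width shrinks from 40 to 10 percentage points. That shrinking width is the progress a single-value estimate would hide.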

Guesstimation tips

- Estimate a single value first to provide an anchor point.

- The minimum should usually be zero for early-stage projects.

- Framing matters. If a venture capitalist asks for a ranged estimate before approving funding, the entrepreneur is incentivized to narrow their ranges and inflate the estimate.

- Try the Equivalence Bet (see below) to improve the estimates and represent a 90% estimation interval.

Use Fermi Decomposition

Break it down! When faced with an impossibly complex problem, divide it into smaller, more solvable problems. If you are trying to estimate a complex variable, break it into sub-estimates. The physicist Enrico Fermi was legendary for his ability to estimate using this method, showing a surprising degree of accuracy based on limited information. He once estimated the power of an atomic blast based on how far the shock wave blew confetti he dropped from his hand.

I tested this myself in a survey. I asked participants to estimate the height of the Burj Khalifa in Dubai, the tallest building in the world. The answers were wildly inaccurate, ranging from 220 to 6,000 feet. On average, they were 62.27% off of the real height of 2,717 feet.

When I run a similar survey asking participants to estimate the number of floors, the average height per floor, and the size of any antennae on top of the building, the participants can break down the problem into more manageable, quantifiable chunks of information. The accuracy of the average estimate improves by about 5.5%. (Attendees of our Innovation Accounting Program show more improvement here, going from 32.15% to 4.4% average error when estimating heights of buildings.)

The same approach applies to business or impact models. Don’t estimate the total profit. Estimate the margin, the number of customers, the price point, the customer retention rate, and the acquisition rate. Use easier-to-collect data wherever possible.

How you do it

- Identify the variable you want to solve for.

- Identify sub-variables that offer simpler inputs.

- Calculate the sub-variables.

- Calculate the main variable.
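The four steps above can be sketched with the building example. The sub-variable values below (floors, feet per floor, spire height) are hypothetical guesses chosen for illustration, not survey results. Only the 2,717-foot actual height comes from the text above, and the function names are assumptions.

```python
def building_height_ft(floors, feet_per_floor, spire_ft):
    """Main variable: total height built up from simpler sub-estimates."""
    return floors * feet_per_floor + spire_ft


def percent_error(estimate, actual):
    """How far off the estimate is, as a percentage of the actual value."""
    return abs(estimate - actual) / actual * 100


ACTUAL_HEIGHT_FT = 2717  # real height of the Burj Khalifa, as stated above

# Hypothetical sub-estimates that a participant might find easier to guess.
estimate = building_height_ft(floors=160, feet_per_floor=13, spire_ft=500)

print(f"estimate: {estimate} ft")
print(f"error:    {percent_error(estimate, ACTUAL_HEIGHT_FT):.1f}%")
```

The same structure works for a business model: replace the sub-variables with things like number of customers, price point, and margin, then multiply through.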

Guesstimation tips

- When creating a financial or impact model, base the variables on user behavior to make it easier to estimate and to test with real data.

Avoid known biases

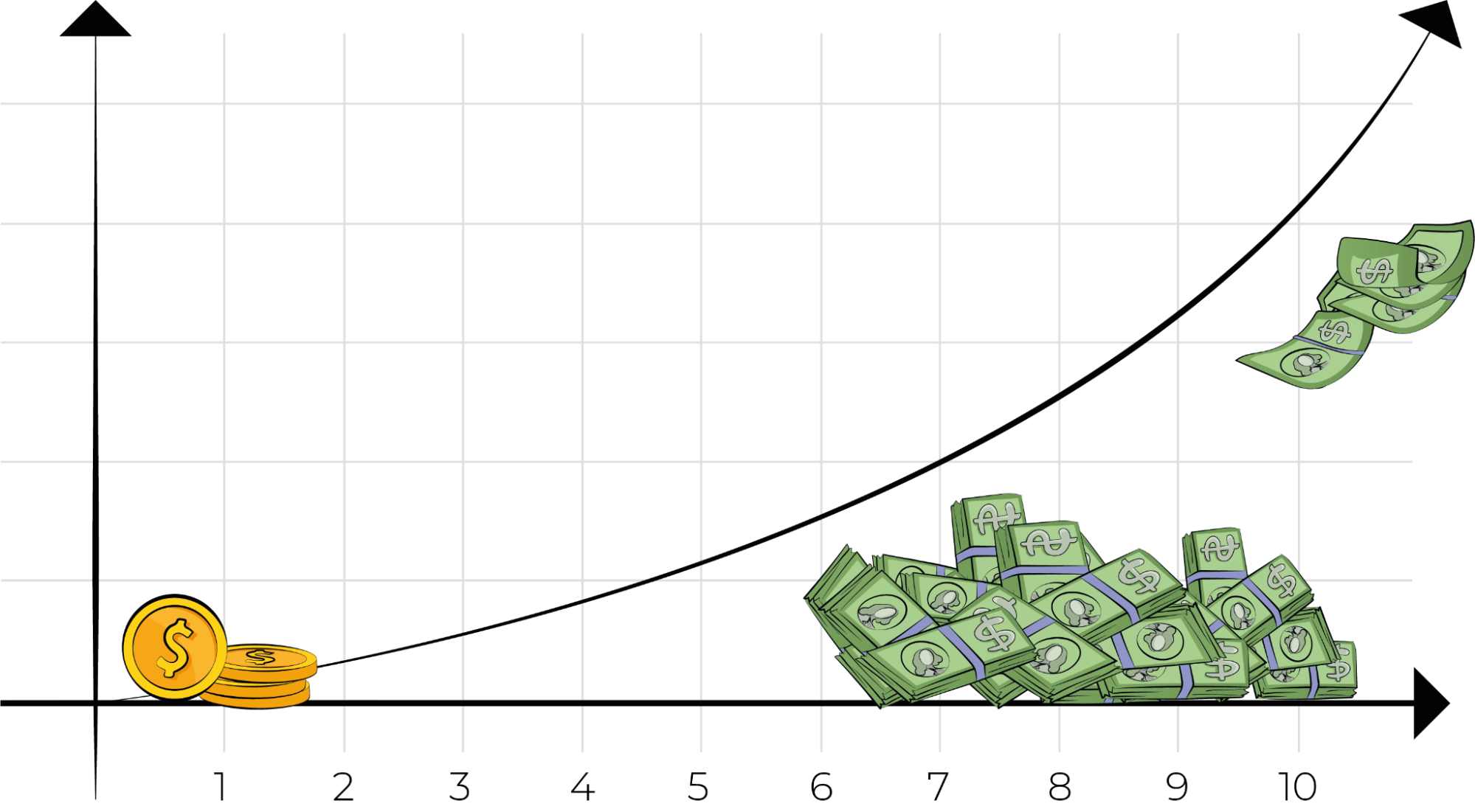

There are some situations where humans are universally terrible guessers. Sometimes there are systemic biases that are going to throw off everyone. Biases such as anchoring (the tendency to give too much weight to one data point), framing (conclusions being affected by how the information is presented), and cultural biases (race, gender, etc.) are well-known and universally experienced – which means they can be predicted and mitigated – not solved, but at least accounted for as best we can.

One common bias in business guesstimation involves compounding effects, such as the impact of interest rates. If you ask a typical person to estimate the value of a dollar compounding at 10% interest over 20 years, they tend to wildly underestimate it.

Compounding effects reveal themselves in a wide range of business predictions, including the growth of computing power (Moore’s law), AI algorithm improvements, network effects from communities, and viral marketing campaigns. If you suspect you are estimating something with exponential effects, don’t try to directly estimate the impact! Estimate the compounding rate and do the math, which should at least get you within the proper order of magnitude.
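To see how large the gap can be, here is the dollar-at-10%-for-20-years example worked out. The linear figure is included only as an illustration of one way a gut estimate might go wrong; it is an assumption, not a claim about how any particular person reasons. Function names are hypothetical.

```python
def compound(principal, rate, periods):
    """Value after compounding `rate` once per period."""
    return principal * (1 + rate) ** periods


def linear_growth(principal, rate, periods):
    """Illustrative non-compounding guess: the same gain added every period."""
    return principal * (1 + rate * periods)


compounded = compound(1.0, 0.10, 20)
linear = linear_growth(1.0, 0.10, 20)

print(f"compounded: ${compounded:.2f}")  # prints compounded: $6.73
print(f"linear:     ${linear:.2f}")      # prints linear:     $3.00
```

Estimating the rate (10%) and the number of periods (20) and then doing the math gives a result more than twice the linear guess.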

How you do it

- Run a Fermi Decomposition first to simplify the process.

- Review a list of common cognitive biases.

- Consider the impact of common human prejudices.

Guesstimation tips

- Check if you may have any compounding effects.

- Look for systemic biases that might be affecting everyone on your team.

Equivalence bet

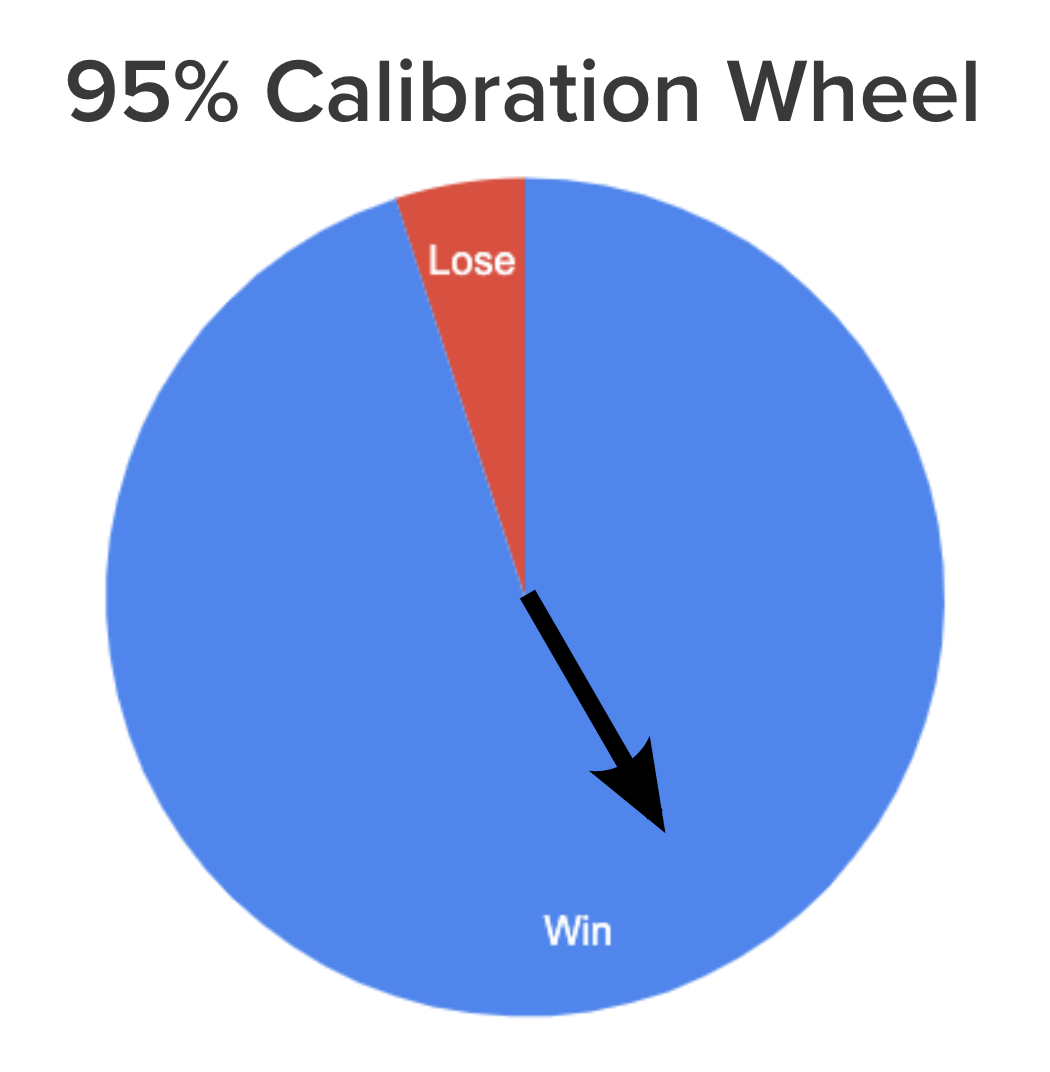

How confident are you in your guesstimation abilities? For most of us, the answer is too confident. Statistically, we are more confident in our accuracy than the evidence shows us to be. Whether we think too highly of our guessing ability or think our opinion should carry more weight than others’, it’s a basic human trait to be overconfident. In one often-cited survey, 93% of American respondents rated themselves as above-average drivers! We are wrong more often than we think.

So how can we improve our confidence in our own confidence? This starts by expressing our estimate as a range as we discussed above and also expressing our level of confidence. For example, “I am 95% confident that the population of Brazil is above 50 million and I am 95% confident that the population is below 200 million people.” In other words, “I am 90% confident that the population of Brazil is between 50 and 200 million people.” If I am correctly assessing my knowledge, then if I make 10 such statements, I should be right 90% of the time. If our ranged estimates are only right 70% of the time, we are overconfident. If we are right 100% of the time, we are underconfident!

To make sure we are expressing our confidence accurately, we can use the equivalence bet. Essentially, we can put some money on the line. We humans are very overconfident in assessing any individual risk, like our ability to handle a car in the rain, but we’re surprisingly good at comparing two risks against each other. We might be overconfident at our luck at the roulette wheel, but we know we stand a better chance at poker than betting on a single number in roulette. So we can compare our confidence in our guesstimation to our confidence in another uncertain situation such as a spinning wheel.

If we flick the spinner around the wheel, it will land in the blue area 95% of the time and we win. 5% of the time it will land in the red and we lose. Now we have something to compare to our previous statement, “I am 95% confident that the population of Brazil is below 200 million.”

If you are forced to bet $20 on either the calibration wheel or that the population of Brazil is below 200 million, which bet do you prefer? If you prefer to bet your money on the calibration wheel, that means you think the wheel represents a better chance of success. Since the odds of winning with the wheel are exactly 95%, something deep in our brain is telling us that we are less than 95% confident in our estimate of Brazil’s population.

Now we can adjust. Let’s increase the upper bound of our range. I know the population of the United States is 330 million, and I’m pretty sure that Brazil is a bit smaller. So, “I am 95% confident that the population of Brazil is below 330 million.” Again, we can consider whether we want to bet on the wheel or the new estimate of less than 330 million. In this case, I would prefer to bet on my estimate. Preferring my estimate means I have overcompensated – I am actually 100% confident that Brazil’s population is less than that of the US, so I need to adjust my estimate again.

Now, “I am 95% confident that the population of Brazil is below 300 million.” Comparing my estimate a final time, I am indifferent to betting on the wheel or my estimation. Now I am expressing my confidence accurately by using the calibration wheel to compare my estimate to a known risk. If I do this for both the bottom and top range of my estimate, I will be 95% certain that each end of the range is accurate (5% possible error in each end means a 90% confidence in the total range).

This is a difficult method that takes practice, but it’s a powerful tool, and you can improve your guesstimation abilities with just a few hours of work. If you’re betting millions of dollars on a marketing campaign or even spending a few weeks improving your nonprofit fundraising, it’s worth putting in the time to calibrate your confidence.

How you do it

- Create a ranged estimate.

- Evaluate the upper range.

- Compare betting real money on the 95% equivalence wheel to betting on your upper range estimate.

- If you would rather bet on the wheel, increase your upper range.

- If you would rather bet on your upper range, decrease your upper range.

- Repeat until you are indifferent between the two bets.

- Evaluate the lower range.

- Compare betting real money on the 95% equivalence wheel to betting that the real value is above your lower range.

- If you would rather bet on the wheel, decrease your lower range.

- If you would rather bet on your lower range, increase your lower range.

- Repeat until you are indifferent between the two bets.
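The adjustment loop for the upper range can be sketched as follows. In practice a person answers each bet. Here a simulated bettor stands in so the example runs on its own. The function names, the step-halving rule, and the simulated bettor's thresholds (indifferent between 290 and 310 million) are all assumptions made for illustration, not part of the method as described.

```python
def calibrate_upper_bound(start, prefers, step, max_rounds=50):
    """Adjust an upper bound until the bettor is indifferent.

    `prefers(bound)` must return "wheel", "estimate", or "indifferent".
    """
    bound, last_choice = start, None
    for _ in range(max_rounds):
        choice = prefers(bound)
        if choice == "indifferent":
            return bound
        if choice not in ("wheel", "estimate"):
            raise ValueError(f"unexpected answer: {choice!r}")
        if last_choice is not None and choice != last_choice:
            step /= 2  # we overshot, so move in smaller steps
        # Preferring the wheel means we are under 95% sure: raise the bound.
        # Preferring the estimate means we are over 95% sure: lower it.
        bound += step if choice == "wheel" else -step
        last_choice = choice
    return bound


def simulated_bettor(bound_millions):
    """Hypothetical stand-in for a person answering each bet honestly."""
    if bound_millions < 290:
        return "wheel"
    if bound_millions > 310:
        return "estimate"
    return "indifferent"


upper = calibrate_upper_bound(start=200, prefers=simulated_bettor, step=130)
print(f"calibrated upper bound: {upper} million")
```

The lower range works the same way with the directions reversed: preferring the wheel lowers the bound, and preferring the estimate raises it.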

Guesstimation tips

- Imagine you are betting real money. The more real the bet feels, the more you will engage your brain’s natural ability to manage risk.

- Have someone else ask you to bet each time. We tend to get lazy and quit calibrating too early.

- Practice this skill to get good at it.

Try it out for yourself with our Calibration Training Exercise!

Practice guesstimation

Some people make careers out of being good estimators – we call them bookies, or odds makers. These are professionals who set gambling odds for a living. If they are bad at it, they don’t stay in business very long. How do they get good at estimating and maintain that skill throughout a career? They practice.

The first time working with the equivalence bet is painful. So is the second. And the third. If you are attempting to create a 90% estimation interval, you will most likely wind up with an overconfident 70% estimation interval, which will throw off any calculations you are doing. You’ll do this more than once. But it will make you a better guesstimator.

These tools require diligence. If you want to be a career entrepreneur, estimation is a critical skill. You can’t manage what you can’t measure.

How you do it

- Try.

- Check yourself against real data.

- Repeat.
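Checking yourself against real data can be as simple as counting how often the real value landed inside your range. The sketch below assumes a practice log of ten 90% ranged estimates. The intervals and actual values are made-up numbers (travel times in minutes), and the function names are hypothetical.

```python
def hit_rate(intervals, actuals):
    """Fraction of actual values that fell inside their estimated range."""
    if not actuals or len(intervals) != len(actuals):
        raise ValueError("need one actual value per interval")
    hits = sum(low <= actual <= high
               for (low, high), actual in zip(intervals, actuals))
    return hits / len(actuals)


def verdict(rate, target=0.90):
    """Compare the observed hit rate to the intended estimation interval."""
    if rate < target:
        return "overconfident: widen your ranges"
    if rate > target:
        return "underconfident: tighten your ranges"
    return "calibrated"


# Made-up practice log: ten ranged estimates and what actually happened.
intervals = [(20, 30), (10, 15), (40, 60), (5, 10), (25, 35),
             (15, 20), (30, 45), (50, 70), (8, 12), (18, 25)]
actuals = [27, 17, 55, 7, 33, 22, 41, 64, 9, 31]

rate = hit_rate(intervals, actuals)
print(f"hit rate: {rate:.0%} -> {verdict(rate)}")
```

With only ten estimates the hit rate is noisy, so treat the verdict as a nudge rather than a measurement, and keep logging.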

Guesstimation tips

- Practice by estimating things you do or see all the time, such as shopping prices, sports scores, and travel time.

- Find a partner to compete against to make it more fun.

Lessons Learned

- Wisdom of the crowd - Form independent guesstimates, then combine them.

- Estimate in ranges - Learn to express your level of uncertainty.

- Fermi decomposition - Break estimates into smaller pieces and estimate them separately.

- Avoid known biases - Account for biases that you can predict and mitigate.

- Equivalence bet - Compare your estimate to a known uncertainty.

- Practice - …makes perfect!

Frequently Asked Questions

What is guesstimation and why does it matter in innovation?

Guesstimation is the practice of turning limited information into useful quantitative estimates when hard data isn’t available. In innovation, we almost always lack complete data — we don’t know our market size, conversion rates, or margins with certainty. Good guesstimation helps us make smarter investment decisions by weighing odds rather than relying on pure gut feelings or blind faith in a project.

How does Fermi Decomposition improve the accuracy of estimates?

Fermi Decomposition breaks a complex, hard-to-estimate variable into smaller, more manageable sub-variables. Instead of guessing total profit directly, we estimate margin, number of customers, price point, and retention rate separately. As product managers, we find this approach dramatically improves accuracy — in one survey, estimation accuracy improved by about 5.5% simply by breaking a building’s height into floors, floor height, and antenna size.

Why should I estimate in ranges instead of single values?

Single-value estimates hide how uncertain we actually are. A ranged estimate — like a 10%–50% conversion rate — communicates our confidence level to decision-makers and shows how much information we actually have. As we run experiments, we can narrow the range (say, to 25%–35%), visibly demonstrating progress even when the average estimate stays the same. This makes guesstimation far more honest and actionable.

What is the equivalence bet technique for calibrating confidence?

The equivalence bet compares your confidence in an estimate against a known probability, like a calibration wheel with a 95% chance of winning. If you’d rather bet money on the wheel than on your estimate, you’re less confident than you claimed — so you widen your range. You adjust until you’re indifferent between the two bets. This leverages our natural ability to compare risks, helping us express confidence more accurately.

How can teams avoid biases when making guesstimations?

We should first use Fermi Decomposition to simplify the problem, then review common cognitive biases like anchoring, framing, and overconfidence. Watch especially for compounding effects — people systematically underestimate exponential growth like interest rates or viral marketing. Instead of estimating the total impact directly, estimate the compounding rate and calculate the result. Also, ensure diverse and independent input to counteract systemic biases across your team.
