User Insights in Context: 3 Traps That Mislead Product Teams

Why your averages are lying and your failed experiments might be goldmines

Quick Answer: User insights only drive innovation when interpreted in context — not taken at face value. As product managers, we should avoid three common traps: relying on averages that mask enthusiastic segments, evaluating responses in aggregate instead of by persona, and discarding invalidated experiments that may contain unexpected opportunities. The key is to segment data, align analysis with specific business objectives, and ask open-ended questions that expand our understanding of users beyond narrow hypotheses.

(This is a guest post by Michael Bamberger. He is the co-founder of Alpha UX, a real-time user insights platform for enterprise product teams. I reached out to chat about his approach for generating user insights for new products. You can find him on Twitter or LinkedIn.)

Seeking out and applying ‘best practices’ is a tried-and-true strategy. Unfortunately, when trying to apply what has worked well for others, many product teams end up following those practices dogmatically instead of adapting them to their specific case. Best practices are rarely applicable in all contexts and scenarios. Rather than focusing on what other people are doing in a vacuum, seek to understand and implement the principles and methodologies that led to those outcomes.

Generating user insights is no different. There are few, if any, practices that should be applied universally. Each organization, and even each team within it, must construct a practice that aligns with its business objectives and target market. What might be a profound insight for one team could be utterly meaningless for another.

In my experience, there are three common occurrences that can trip up otherwise diligent product teams. Watch out for these when interpreting insights.

Just your average illusion

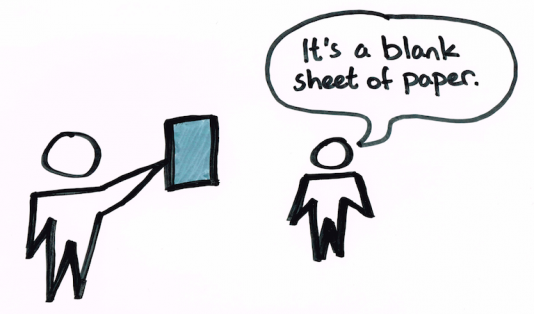

You’ve just run a cohort of users through a simulated prototype followed by a survey asking them a range of questions to measure perception of value. Most of the ratings, from ease-of-use to likelihood-to-try, were asked on a scale of 1 to 10. After compiling all the answers, you find that the average for each was a dull 5, and you’re looking for at least a 7.5 or above. On to the next prototype variant - this one’s a bust.

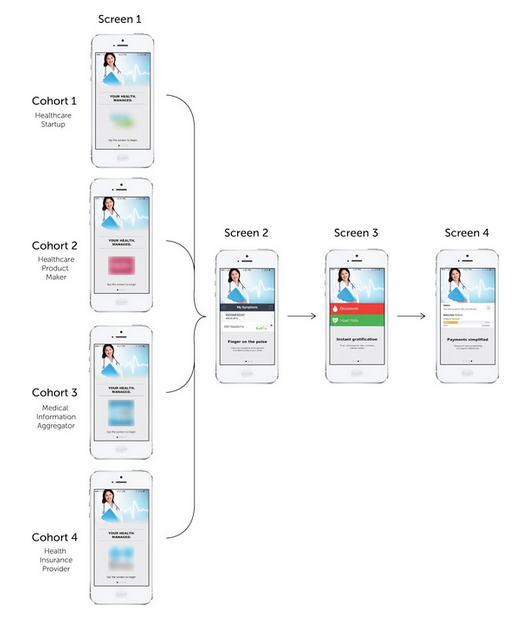

(Four cohorts each go through a unique experience with a test variable. In this example, each cohort sees a unique landing page to test how the branding impacts their perception of the app. They then answer the same exact questions at the end so it’s an apples-to-apples comparison.)

(We ask 10-15 questions that collectively amount to a ‘perception of value.’)

Well, hold on. Averages don’t tell the whole story. An average rating of 5 may be a result of all the respondents rating it a 5, or half rating it a 0 and half rating it a 10.

With a large media company client, we tested an app idea for sports bars. If we had done nothing more than compute an average of the survey results, we might have believed that the idea was perceived as merely mediocre. However, a closer look at the data showed that one segment of respondents was extremely enthusiastic about the idea. This enthusiastic segment of the population could actually be large enough to meet the client’s business goals, and we would have missed it had we simply looked at the average.

Don’t overlook the mode, median, and variance, but more importantly, consider your business objectives during analysis. Insights are always dependent upon what is meaningful to you and your business.
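To make the point about averages concrete, here is a minimal sketch using only Python's standard library. The ratings are invented for illustration (they are not data from the sports bar study), and the `summarize` helper and its `threshold` of 8 for an "enthusiast" are assumptions, not part of any described method. The polarized cohort uses 1s and 9s so that both cohorts stay on a 1 to 10 scale and still share a mean of 5.

```python
from collections import Counter
from statistics import mean, median, pvariance

# Hypothetical 1-10 ratings, invented for illustration.
uniform = [5, 5, 5, 5, 5, 5, 5, 5]      # everyone is lukewarm
polarized = [1, 1, 1, 1, 9, 9, 9, 9]    # half dislike it, half love it


def summarize(ratings, threshold=8):
    """Return summary statistics plus the share of ratings at or above threshold."""
    enthusiasts = sum(1 for r in ratings if r >= threshold)
    return {
        "mean": mean(ratings),
        "median": median(ratings),
        "variance": pvariance(ratings),
        "histogram": dict(sorted(Counter(ratings).items())),
        "enthusiast_share": enthusiasts / len(ratings),
    }


uniform_summary = summarize(uniform)
polarized_summary = summarize(polarized)

for name, summary in (("uniform", uniform_summary), ("polarized", polarized_summary)):
    print(name, summary)
```

Both cohorts report the same mean, but the variance and the histogram show that only the polarized cohort contains a block of enthusiastic respondents. Whether that block is large enough to matter depends on the business objective, which is a judgment the code cannot make.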

Data personified

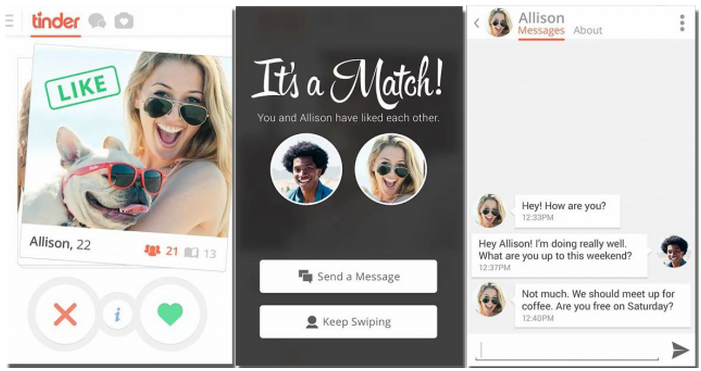

Along the same lines, make sure that you have a clear understanding of the respondent pool. Early on in the experimentation process, you may want to use a broad target audience so that you can narrow down to a persona iteratively. But you’ll need to ask a variety of demographic and behavioral questions so that you can filter and segment answers in order to do so.

As you gain a clearer picture of your target personas, make sure to evaluate their responses in context and not in aggregate. For example, we’ve frequently prototyped apps for parents and grandparents, using common design conventions, like Tinder’s swipe. Early in the testing process, the app received overall high ratings for ease-of-use. However, when we zeroed in on respondents aged 55+, the results were not so positive. As it turns out, a number of the interactions and design concepts tested were not intuitive to this particular segment.

(Source: phandroid. Tinder popularized the design convention to swipe right to accept or left to reject, but it’s not ‘intuitive’ for people who have never used Tinder.)

Evaluate user insights contextually to define user segments, but also do the reverse. Define user personas and segment their responses for comparison. Often, you’ll find outliers once you take a closer look at the data.
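The gap between an aggregate score and a persona-level score can be sketched in a few lines of Python. Everything here is hypothetical: the survey rows are invented, and the `age_segment` and `mean_by_segment` helpers are illustrative names rather than anything from a real tool. The only detail taken from the example above is the 55+ cut-off.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey rows, invented for illustration: (age, ease-of-use rating on 1-10).
responses = [
    (24, 9), (31, 8), (38, 9), (45, 8), (29, 9), (35, 8),
    (58, 4), (63, 3), (70, 5),
]


def age_segment(age):
    """Bucket a respondent; the 55+ cut-off mirrors the segment discussed above."""
    return "55+" if age >= 55 else "under 55"


def mean_by_segment(rows):
    """Group ratings by segment and return the mean rating for each segment."""
    grouped = defaultdict(list)
    for age, rating in rows:
        grouped[age_segment(age)].append(rating)
    return {segment: mean(ratings) for segment, ratings in grouped.items()}


overall = mean([rating for _, rating in responses])
by_segment = mean_by_segment(responses)

print("overall:", overall)
print("by segment:", by_segment)
```

In this made-up data the overall mean looks healthy, while the 55+ segment scores far lower. The same grouping works for any demographic or behavioral question, provided the survey collected it in the first place.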

Finding the top of the mountain

It’s quite common for customer interviews or experiments to lead to invalidated hypotheses. Even if what you’re specifically testing for is invalidated, there can still be a treasure trove of insights and data from those research exercises that might be meaningful. Only pursuing validated hypotheses is in fact not the best practice if the goal is to understand your users. Eric Ries, the author of The Lean Startup, uses a relevant analogy on his blog to describe the problem with only pursuing a validated direction:

Whenever you’re not sure what to do, try something small, at random, and see if that makes things a little bit better. If it does, keep doing more of that, and if it doesn’t, try something else random and start over. Imagine climbing a hill this way; it’d work with your eyes closed. Just keep seeking higher and higher terrain, and rotate a bit whenever you feel yourself going down. But what if you’re climbing a hill that is in front of a mountain? When you get to the top of the hill, there’s no small step you can take that will get you on the right path up the mountain. That’s the local maximum. All optimization techniques get stuck in this position.

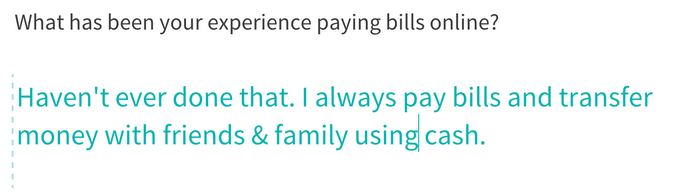
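The local maximum in the analogy can be reproduced with a toy example. The sketch below is a simplified, deterministic variant of the procedure in the quote (it always steps to the higher neighbor instead of trying random steps), and the `terrain` values are invented: a small hill sits in front of a taller mountain.

```python
# Hypothetical one-dimensional terrain: index = product direction, value = payoff.
# A small hill (height 4 at index 2) sits in front of a mountain (height 10 at index 8).
terrain = [1, 3, 4, 2, 1, 3, 6, 9, 10, 7]


def hill_climb(heights, start):
    """Greedy local search: step to the higher neighbor until neither is higher."""
    position = start
    while True:
        neighbors = [i for i in (position - 1, position + 1) if 0 <= i < len(heights)]
        best = max(neighbors, key=lambda i: heights[i])
        if heights[best] <= heights[position]:
            return position  # no small step improves things: a local maximum
        position = best


stuck_at = hill_climb(terrain, start=0)   # starts at the foot of the small hill
reached = hill_climb(terrain, start=5)    # starts at the foot of the mountain

print("from 0 ->", stuck_at, "height", terrain[stuck_at])
print("from 5 ->", reached, "height", terrain[reached])
```

Starting on the left, the search stops on the small hill because every single step from there leads downward. Only a different starting point, which in research terms means exploring outside what has already been validated, reaches the mountain.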

Essentially, constraining your experiments to areas you already know severely limits your capacity for learning. Your job as the product owner is to discover the mountain and then figure out how to climb it.

In addition to testing new experiment variants, enabling users to provide qualitative feedback is another simple way to capture information outside the inherent scope of your inquiry. With a financial services client, we wanted to learn about users in emerging markets and their experiences paying bills online. In that research, users consistently mentioned in qualitative interviews and open-ended survey questions that they always pay friends and family in cash, and there isn’t a mobile app they rely on for peer-to-peer transactions. Even though the tests were specifically about bill payment, we found an interesting tangent that opened an entirely new product opportunity.

(Enabling open-ended feedback can lead to unexpected learnings)

While you might not always find what you’re looking for, other problems or value propositions worth exploring may be discovered through the research process. Remember, the goal is to understand your user. Ask open-ended questions that enable you to expand the scope of that understanding. If you’re too focused on testing one specific idea, as opposed to simply discovering and learning about your target users, you could miss out on an opportunity or arrive at biased conclusions and interpretations of the data.

Lessons Learned

Acknowledging the value of experimenting to generate customer insights is among the initial steps toward innovating successfully. Be careful not to blindly follow best practices that have worked elsewhere but that are not tailored to meet your objectives. The only way to effectively scale an experimentation process is to apply sound and consistent reasoning and analysis that takes context into account.

---------------------

Bio: Michael Bamberger is the co-founder of Alpha UX, a real-time user insights platform for enterprise product teams. He has been developing digital products for more than 15 years, serving in roles ranging from developer to product manager. He was previously a Vice President at Greenhaven Partners, a private equity firm, where he managed digital strategy and research business units across the portfolio of companies.

Frequently Asked Questions

What are user insights and why do they matter for product teams?

User insights are the learnings we gather from experiments, interviews, and user research that help us understand how people perceive and interact with our products. They matter because they guide product decisions, but as product managers, we need to interpret them in context — what’s meaningful for one team or business objective could be entirely irrelevant for another.

Why is relying on averages dangerous when analyzing user research data?

Averages can mask critical segments within your data. An average score of 5 out of 10 might mean everyone rated it a 5, or it could mean half your respondents rated it a 0 and the other half a 10. That enthusiastic half could represent a viable market. We should also look at mode, median, and variance, and always evaluate results against our specific business objectives.

How do user personas affect the way we interpret user insights?

Evaluating user insights in aggregate without segmenting by persona can lead to misleading conclusions. For example, an app might score high on ease-of-use overall, but a specific demographic like users aged 55+ might struggle with design conventions like Tinder’s swipe. We need to segment responses by persona to uncover these critical differences and design accordingly.

What should product teams do when an experiment invalidates their hypothesis?

Don’t discard everything — invalidated hypotheses can still yield valuable user insights. Qualitative feedback and open-ended questions often surface unexpected opportunities outside your original scope. One financial services experiment about bill payment revealed an unmet need for peer-to-peer mobile payments in emerging markets. The goal is to understand your users broadly, not just validate a single idea.

How do you avoid blindly following best practices in product experimentation?

Rather than copying what worked for other teams, we should focus on understanding the principles and methodologies behind those outcomes and then contextualize them for our specific business objectives and target market. The only way to effectively scale experimentation is to apply consistent reasoning and analysis that accounts for our unique context, audience segments, and goals.
